vision.HistogramBasedTracker

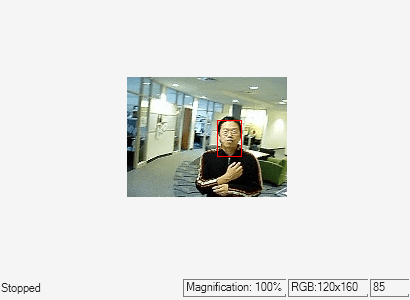

Histogram-based object tracking

Description

The histogram-based tracker uses the continuously adaptive mean shift (CAMShift) algorithm [1] for object tracking. It uses a histogram of the object's pixel values to locate the object in each new frame.
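The sketch below illustrates the general idea in base MATLAB, without the toolbox. It builds a normalized histogram of an object's pixel values, back-projects that histogram onto the image to get a per-pixel likelihood map, and then takes one mean-shift step by moving a search window to the centroid of the map inside it. This shows the general technique only and is not the internal implementation of vision.HistogramBasedTracker. The synthetic image, the 16-bin histogram, and the window coordinates are assumptions. Full CAMShift repeats the mean-shift step until the window stops moving, and it also resizes the window from the zeroth moment M00.

```matlab
% Illustrative sketch: histogram back-projection plus one mean-shift step.
% NOT the internal code of vision.HistogramBasedTracker; the image,
% bin count, and window below are assumptions.
I = 0.1 * ones(120, 160);          % background value 0.1
I(40:70, 50:90) = 0.7;             % object pixels: rows 40-70, cols 50-90

numBins = 16;                      % hypothetical bin count
edges = linspace(0, 1, numBins + 1);

% 1) Normalized histogram of the object's pixel values
objPatch = I(40:70, 50:90);
objHist = histcounts(objPatch(:), edges);
objHist = objHist / max(objHist);  % scale so the peak bin is 1

% 2) Back-projection: each pixel takes the histogram value of its bin
binIdx  = discretize(I, edges);
probMap = objHist(binIdx);         % same size as I: 1 on object, 0 elsewhere

% 3) One mean-shift step. Window is [x y width height] and starts
%    up and to the left of the object.
win = [40 30 41 31];
winRows = win(2):win(2) + win(4) - 1;
winCols = win(1):win(1) + win(3) - 1;
[X, Y] = meshgrid(winCols, winRows);
P = probMap(winRows, winCols);
M00 = sum(P(:));                   % zeroth moment (total likelihood mass)
xc = sum(X(:) .* P(:)) / M00;      % centroid x inside the window
yc = sum(Y(:) .* P(:)) / M00;      % centroid y inside the window

% Re-center the window on the centroid, keeping its size
newWin = [xc - (win(3) - 1)/2, yc - (win(4) - 1)/2, win(3), win(4)];
```

Here the window moves from [40 30 41 31] to [45 35 41 31], that is, toward the object whose top-left corner is at (50, 40).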

To track an object:

1. Create the vision.HistogramBasedTracker object and set its properties.
2. Call the object with arguments, as if it were a function.
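Here is a minimal sketch of these two steps on synthetic data. It assumes Computer Vision Toolbox is installed. As the Creation section notes, the tracker also needs an exemplar region from initializeObject before its first call. The frames (a bright 30-by-40 rectangle on a dark background) and the region R are made up for illustration. With real video you would typically pass a single-channel feature image, for example a hue channel.

```matlab
% Minimal two-step workflow on synthetic frames (requires Computer Vision
% Toolbox). Frame contents and region R are illustrative assumptions.
frame1 = 0.1 * ones(120, 160);       % background value 0.1
frame1(41:70, 51:90) = 0.8;          % object: 30 rows x 40 columns
R = [51 41 40 30];                   % [x y width height] around the object

% Step 1: create the System object, then give it an exemplar region
hbtracker = vision.HistogramBasedTracker;
initializeObject(hbtracker, frame1, R);

% Step 2: call the object like a function on the next frame
frame2 = 0.1 * ones(120, 160);
frame2(44:73, 56:95) = 0.8;          % object moved 5 px right, 3 px down
bbox = hbtracker(frame2);            % [x y width height] of tracked object
disp(bbox)
```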

To learn more about how System objects work, see What Are System Objects?

Creation

Description

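hbtracker = vision.HistogramBasedTracker returns a histogram-based tracker, hbtracker, that tracks an object by using the CAMShift algorithm. To initialize tracking, use the initializeObject function to specify an exemplar image of the object.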

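hbtracker = vision.HistogramBasedTracker(Name,Value) sets properties using one or more name-value pairs. Enclose each property name in quotes. For example:

hbtracker = vision.HistogramBasedTracker('ObjectHistogram',[])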
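With 'ObjectHistogram' set to [] (the empty default), the tracker has no object model until initializeObject runs. The sketch below checks this. It assumes Computer Vision Toolbox, and it relies on our reading of the property description, namely that initializeObject fills ObjectHistogram with a histogram normalized to values between 0 and 1. The synthetic frame and region are illustrative.

```matlab
% Name-value construction, then inspect ObjectHistogram before and after
% initialization (requires Computer Vision Toolbox). Assumes that
% initializeObject populates ObjectHistogram; frame and region are made up.
hbtracker = vision.HistogramBasedTracker('ObjectHistogram', []);
emptyBefore = isempty(hbtracker.ObjectHistogram);   % expected: true

I = 0.1 * ones(120, 160);
I(41:70, 51:90) = 0.8;                  % synthetic object
initializeObject(hbtracker, I, [51 41 40 30]);

h = hbtracker.ObjectHistogram;          % normalized object histogram
disp(h)
```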

Properties

Usage

Description

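bbox = hbtracker(I) returns the bounding box, bbox, of the tracked object in the input image I, as a four-element vector [x y width height]. Before calling the tracker for the first time, you must identify the object to track. Use the initializeObject function to do this.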

[bbox,orientation] = hbtracker(I) additionally returns the angle between the x-axis and the major axis of the ellipse that has the same second-order moments as the object. The returned angle is between -pi/2 and pi/2.
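As a worked example in base MATLAB, the sketch below computes the central moments mu20, mu02, and mu11 of a weight map P. It then computes the angle theta = 0.5*atan2(2*mu11, mu20 - mu02), which always falls in [-pi/2, pi/2]. This is the standard moment-based formula, shown for illustration. It assumes image coordinates with y pointing down, and the tracker's own sign convention may differ.

```matlab
% Orientation of the moment-equivalent ellipse from second-order central
% moments. Assumes y points down (image rows); the tracker's sign
% convention may differ.
P = zeros(100, 100);
for k = 10:60
    P(k, k) = 1;                   % diagonal streak running down and right
end

[X, Y] = meshgrid(1:size(P, 2), 1:size(P, 1));
M00  = sum(P(:));                  % zeroth moment
xc   = sum(X(:) .* P(:)) / M00;    % centroid
yc   = sum(Y(:) .* P(:)) / M00;
mu20 = sum((X(:) - xc).^2 .* P(:)) / M00;
mu02 = sum((Y(:) - yc).^2 .* P(:)) / M00;
mu11 = sum((X(:) - xc) .* (Y(:) - yc) .* P(:)) / M00;

% Angle between the x-axis and the major axis
theta = 0.5 * atan2(2 * mu11, mu20 - mu02);
fprintf('theta = %.4f rad (%.1f deg)\n', theta, theta * 180 / pi);
% prints: theta = 0.7854 rad (45.0 deg)
```

Because mu20 equals mu02 for this streak and mu11 is positive, atan2 returns pi/2 and theta is pi/4. A horizontal streak would give mu11 = 0 and theta = 0.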

[bbox,orientation,score] = hbtracker(I) additionally returns the confidence score for the returned bounding box that contains the tracked object.
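The sketch below requests all three outputs. It assumes Computer Vision Toolbox. The threshold minScore and the recovery policy (calling initializeSearchWindow to restart the search from a known region) are hypothetical choices for illustration, not documented defaults. The frames and region are synthetic.

```matlab
% Request bbox, orientation, and score (requires Computer Vision Toolbox).
% minScore and the recovery step are hypothetical; frames are synthetic.
frame1 = 0.1 * ones(120, 160);
frame1(41:70, 51:90) = 0.8;
R = [51 41 40 30];                 % [x y width height]
hbtracker = vision.HistogramBasedTracker;
initializeObject(hbtracker, frame1, R);

frame2 = 0.1 * ones(120, 160);
frame2(44:73, 56:95) = 0.8;        % object shifted slightly
[bbox, orientation, score] = hbtracker(frame2);

minScore = 0.3;                    % hypothetical threshold; tune for your data
if score < minScore
    % Treat the track as lost and restart the search from a known region
    initializeSearchWindow(hbtracker, R);
end
fprintf('orientation = %.3f rad, score = %.3f\n', orientation, score);
```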

Input Arguments

Output Arguments

Object Functions

To use an object function, specify the System object™ as the first input argument. For example, to release system resources of a System object named obj, use this syntax:

release(obj)

Examples
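The following is a self-contained tracking loop. It assumes Computer Vision Toolbox. Instead of reading a video file, it generates synthetic frames in which a bright rectangle moves 3 pixels right and 3 pixels down per frame. All sizes and pixel values are illustrative assumptions. With real color video, a common choice is to track on the hue channel returned by rgb2hsv. The loop initializes the tracker on the first frame, records the center of each returned bounding box, and releases the object at the end.

```matlab
% Track a moving rectangle across synthetic frames (requires Computer
% Vision Toolbox). Frame size, object size, and motion are assumptions.
H = 120; W = 160;                  % frame size
objH = 30; objW = 40;              % object size in pixels
x0 = 51; y0 = 41;                  % object top-left corner in frame 1
shift = 3;                         % pixels moved per frame in x and y
numFrames = 10;

hbtracker = vision.HistogramBasedTracker;
centers = zeros(numFrames, 2);

for k = 1:numFrames
    % Build frame k with the object at its current position
    x = x0 + (k - 1) * shift;
    y = y0 + (k - 1) * shift;
    frame = 0.1 * ones(H, W);
    frame(y:y + objH - 1, x:x + objW - 1) = 0.8;

    if k == 1
        % Exemplar region on the first frame: [x y width height]
        initializeObject(hbtracker, frame, [x y objW objH]);
    end

    bbox = double(hbtracker(frame));
    centers(k, :) = bbox(1:2) + bbox(3:4) / 2;   % bounding-box center
end

release(hbtracker);                % free resources and unlock the object
disp(centers)
```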

References

[1] Bradski, G.R. "Computer Vision Face Tracking For Use in a Perceptual User Interface." Intel Technology Journal. January 1998.

Extended Capabilities

Version History

Introduced in R2012a