Detect Overfitting When Training Model for Time Series Forecasting

This topic describes various training options and techniques for reducing overfitting when training deep learning models for time series forecasting tasks.

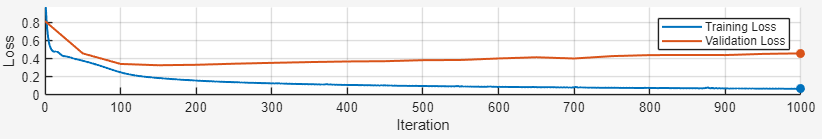

Overfitting occurs when the loss on the validation data is significantly higher than the loss on the training data. A higher validation loss indicates that the model has fit patterns specific to the training data and might not generalize well to unseen data. In the figure above, the training loss is still decreasing while the validation loss is increasing.

It is difficult to know why a model is overfitting, but the Time Series Modeler app provides a list of general suggestions to help prevent overfitting when training a deep neural network.

Detect and Reduce Overfitting

To detect overfitting, you can look at the training plot. You can also use the Time Series Modeler app to automatically detect overfitting.

While training in the Time Series Modeler app, select the Training Diagnostics panel at the bottom of the app to see whether your model is overfitting. The overfitting diagnostic is available only for deep learning models.

To check for overfitting, each time the app evaluates the validation data (at the interval set by the validation frequency), it computes the percentage by which the validation loss exceeds the average training loss. The software computes the average training loss across the last miniBatchSize iterations. If the validation loss is more than 10% higher than the average training loss for two or more validation checks in a row, then the app reports that the model is overfitting.
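The check described above can be sketched in Python. This is a minimal illustration of the arithmetic, not the app's implementation: the function names, the `window` parameter (standing in for the number of iterations averaged), and the loss values are all hypothetical.

```python
from statistics import fmean


def percent_gap(train_losses, val_loss, window):
    """Percentage by which val_loss exceeds the mean of the last `window` training losses."""
    avg_train = fmean(train_losses[-window:])
    return 100.0 * (val_loss - avg_train) / avg_train


def is_overfitting(gaps, threshold=10.0, consecutive=2):
    """True if the most recent `consecutive` gaps all exceed `threshold` percent."""
    if len(gaps) < consecutive:
        return False
    return all(g > threshold for g in gaps[-consecutive:])


# Hypothetical per-iteration training losses, with a validation check
# after iteration 4 and another after iteration 6.
train_losses = [1.00, 0.80, 0.60, 0.50, 0.40, 0.40]
gaps = [
    percent_gap(train_losses[:4], val_loss=0.70, window=2),
    percent_gap(train_losses[:6], val_loss=0.50, window=2),
]
print(gaps, is_overfitting(gaps))
```

In this example, both validation checks show a gap well above 10%, so the sketch flags overfitting. A single check above the threshold is not enough on its own.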

During training, the overfitting diagnostic indicates one of these states:

Not enough information available — This message appears during early training when the app is unable to determine if overfitting is occurring. You will also see this message if you have not specified any validation data.

No issues detected — This message means that the app found no signs of overfitting. The validation loss does not exceed the training loss by more than 10% for two consecutive validation checks.

Overfitting — This message means the app has detected overfitting.
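As a rough sketch, the three states can be expressed as a function of the percentage gaps recorded at the validation checks so far. The function name is hypothetical, and treating "fewer than two checks" as the condition for insufficient information is an assumption made for illustration. The source does not state exactly when the app leaves that state.

```python
def diagnostic_state(gaps, threshold=10.0, consecutive=2):
    """Map the validation-check history to one of the three diagnostic states.

    gaps: percentage by which validation loss exceeded the average training
    loss at each validation check so far (empty if there is no validation data).
    """
    # Assumption: with fewer than `consecutive` checks, no decision is possible.
    if len(gaps) < consecutive:
        return "Not enough information available"
    if all(g > threshold for g in gaps[-consecutive:]):
        return "Overfitting"
    return "No issues detected"


# Hypothetical gaps from three successive validation checks.
history = []
states = []
for gap in [4.0, 12.0, 15.0]:
    history.append(gap)
    states.append(diagnostic_state(history))
print(states)
```

With these values, the state moves from insufficient information, to no issues, to overfitting once two checks in a row exceed the 10% threshold.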

If your model is overfitting, the app suggests these fixes:

| Fix | Where to Fix | Details |
|---|---|---|
| Increase the L2 regularization factor. For example, try increasing by a factor of 10. | In the Training Options section, select Show Advanced options. Then, expand Overfitting and change the L2Regularization training option. | Increasing L2 regularization penalizes large weights, encouraging simpler models that generalize better to new data. For more information, see L2Regularization. |
| Increase the dropout probability. For example, try increasing by 0.1. | Change the Dropout probability to a value greater than 0. This change is equivalent to adding dropout layers after each of the network blocks. | Adding dropout layers randomly deactivates neurons during training, which prevents the model from becoming overly reliant on specific pathways. For more information, see dropoutLayer. |
| Use a decreasing learning rate schedule. For example, try using piecewise, polynomial, exponential, or cosine. | In the Training Options section, select Show Advanced options. Then, expand Learn Rate and change the LearnRateSchedule training option. The decreasing learn rate schedules are piecewise, polynomial, exponential, and cosine. | Using a decreasing learning rate schedule allows the model to make smaller, more precise weight updates as training progresses. This type of schedule helps the model to converge more smoothly and avoid overshooting optimal solutions. For more information, see LearnRateSchedule. |
| Decrease the number of hidden units. For example, try decreasing the number of hidden units by 10%. | Reduce the Hidden units value. | Reducing the number of learnable parameters limits the capacity of the model to memorize training data, encouraging the model to capture only the most essential patterns that generalize well to new data. |
| Use a smaller network architecture. | In the Model gallery, select a different network. | Changing the network architecture allows you to choose a model that can learn relevant patterns while avoiding excessive memorization of training data. For example, choose one of the Small networks, which have fewer learnable parameters. |
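To make three of these fixes more concrete, the following Python sketch shows generic textbook forms of an L2 penalty, dropout, and a cosine learning rate schedule. These are illustrative assumptions only: the function names and values are hypothetical, and the exact formulas that the software uses might differ.

```python
import math
import random


def l2_penalized_loss(data_loss, weights, l2_factor):
    """Data loss plus l2_factor * 0.5 * (sum of squared weights)."""
    return data_loss + l2_factor * 0.5 * sum(w * w for w in weights)


def dropout(activations, probability, rng):
    """Inverted dropout: zero each activation with the given probability and
    rescale the survivors so that the expected value is unchanged."""
    keep = 1.0 - probability
    return [a / keep if rng.random() >= probability else 0.0 for a in activations]


def cosine_learn_rate(initial_rate, epoch, num_epochs):
    """Cosine decay from initial_rate at epoch 0 toward 0 at num_epochs."""
    return 0.5 * initial_rate * (1.0 + math.cos(math.pi * epoch / num_epochs))


# Hypothetical values: a larger L2 factor adds a larger penalty for the same weights.
weights = [3.0, -4.0]
small_penalty = l2_penalized_loss(1.0, weights, l2_factor=0.001)
large_penalty = l2_penalized_loss(1.0, weights, l2_factor=0.01)

# Each activation is either dropped (0.0) or scaled by 1 / (1 - probability).
dropped = dropout([1.0] * 8, probability=0.5, rng=random.Random(0))

# The learning rate shrinks as training progresses.
rates = [cosine_learn_rate(0.01, epoch, num_epochs=10) for epoch in range(11)]
print(small_penalty, large_penalty, dropped, rates)
```

Increasing the L2 factor by a factor of 10 multiplies the penalty term by 10 for the same weights, which is why large weights become more costly to keep.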