imfindcirclesYOLO

Syntax

Description

Add-On Required: This feature requires the Image Processing Toolbox Model for Circle Detection add-on.

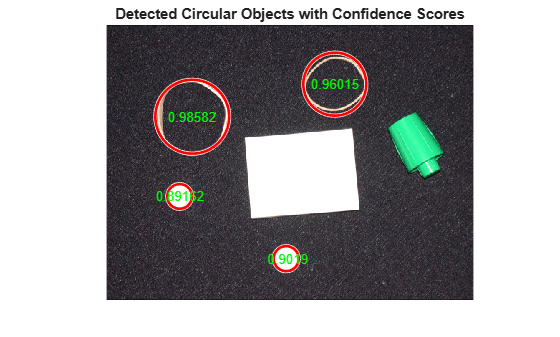

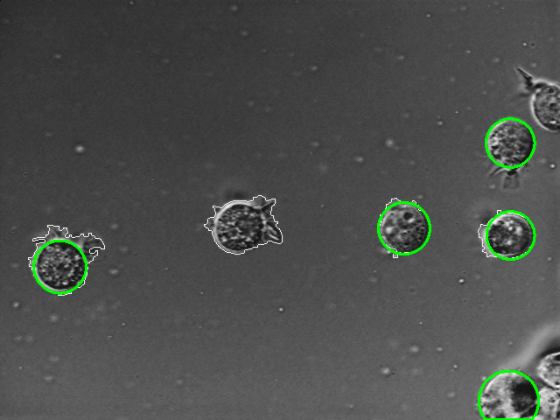

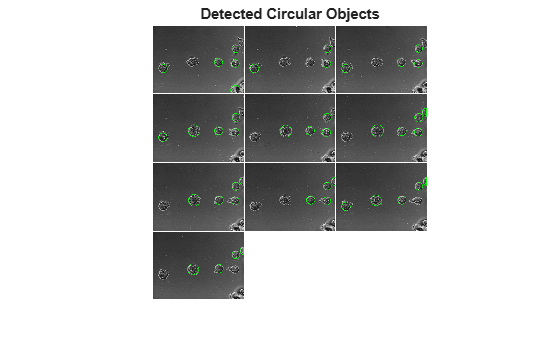

centers = imfindcirclesYOLO(img,Name=Value) specifies options using one or more name-value arguments. For example, specify Method="yolox-small" to find circles using the pretrained YOLOX-Small model.
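The call below is a minimal usage sketch of the name-value syntax described above. It assumes the Image Processing Toolbox Model for Circle Detection add-on is installed. It uses the sample image coins.png that ships with Image Processing Toolbox. It also assumes that centers is returned as an N-by-2 matrix of [x y] pixel coordinates, as it is for imfindcircles. That output format is an assumption, not something this page confirms.

```matlab
% Read a sample image that ships with Image Processing Toolbox.
img = imread("coins.png");

% Detect circles using the pretrained YOLOX-Small model.
centers = imfindcirclesYOLO(img, Method="yolox-small");

% Mark each detected center on the image.
% ASSUMPTION: centers is an N-by-2 matrix of [x y] coordinates.
imshow(img)
hold on
plot(centers(:,1), centers(:,2), "r+", MarkerSize=10)
hold off
```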

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Algorithms

The pretrained networks use the YOLOX object detection architecture [1] and are trained on a curated subset of images from the Landscapes HQ data set [2].

References

[1] Ge, Zheng, Songtao Liu, Feng Wang, Zeming Li, and Jian Sun. “YOLOX: Exceeding YOLO Series in 2021.” arXiv:2107.08430. Preprint, arXiv, August 5, 2021. https://arxiv.org/abs/2107.08430.

[2] Skorokhodov, Ivan, Grigorii Sotnikov, and Mohamed Elhoseiny. “Aligning Latent and Image Spaces to Connect the Unconnectable.” arXiv:2104.06954. Preprint, arXiv, April 14, 2021. https://doi.org/10.48550/arXiv.2104.06954.

Version History

Introduced in R2026a