Working with Big Data

This example shows how Simulink® models handle big data as input to and output from a simulation.

Big data refers to data that is too large to load into system memory all at once. Simulink simulations can produce big data as simulation output and consume big data as simulation input. Big data for both input and output is stored in a MAT file on the hard disk. Only small chunks of this data are loaded into system memory at any time during simulation. This approach is known as streaming. Simulink simulations can stream data to and from a MAT file. Streaming solves memory issues because the hard disk capacity of a system is typically much greater than the capacity of the random access memory (RAM).

The software uses logging to file to stream big data as the output of a simulation. Streaming from file then supplies big data as input to a simulation.

This example demonstrates strategies for big data simulations. To reduce the time required to run the example, the example uses a shorter simulation duration and generates less data than most big data simulations.

Open Example Model

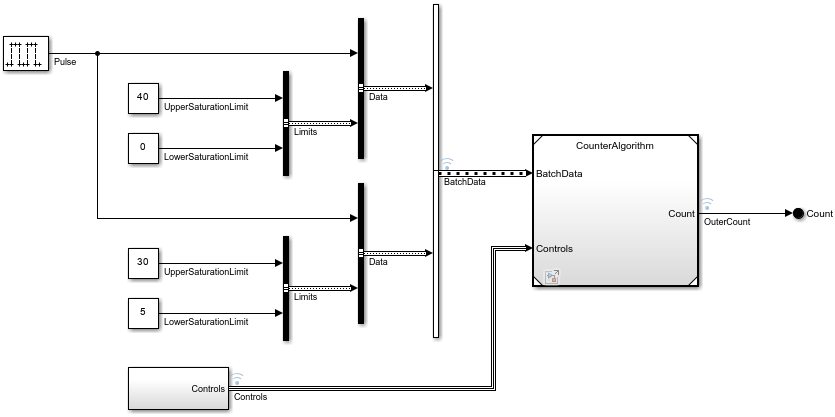

Open the IterativeCounter project. The project opens the CounterSystem model at startup.

openProject("IterativeCounter");

To update the line styles, which help you visually identify buses, update the model. On the Modeling tab of the Simulink® Toolstrip, click Update Model. Alternatively, in the MATLAB® Command Window, enter this command.

set_param("CounterSystem",SimulationCommand="update")

Set Up Logging to File and Simulate Model

When you call the sim function to simulate the model:

- To specify the name of the Dataset object to hold the result of signal logging, set the SignalLoggingName configuration parameter to "topOut".
- To stream output data to a file, enable logging to file by setting the LoggingToFile configuration parameter to "on".
- To log to a MAT file and specify the name of the resulting file, set the LoggingFileName configuration parameter to "top.mat".
- Set the StopTime configuration parameter to 5000 seconds. Note that the stop time is a much larger value for most big data simulations, which results in many more data samples to log.

out = sim("CounterSystem", SignalLoggingName="topOut", ...
    LoggingToFile="on", LoggingFileName="top.mat", StopTime="5000");

Alternatively, to set these configuration parameters before you simulate the model, use the set_param function.
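The set_param alternative can be sketched as follows. This is a minimal sketch, not the example's own code: it assumes the IterativeCounter project is open so that CounterSystem is on the path, and the variable name mdl is introduced here only for illustration. The parameter names and values are the ones used in the sim call above.

```matlab
% Sketch: configure logging to file with set_param, then simulate.
% Assumption: the IterativeCounter project is open, so CounterSystem is on the path.
mdl = "CounterSystem";   % illustrative variable name, not part of the example
load_system(mdl);        % make sure the model is loaded before setting parameters

set_param(mdl, ...
    SignalLoggingName="topOut", ...   % name of the logged Dataset object
    LoggingToFile="on", ...           % stream logged data to a file
    LoggingFileName="top.mat", ...    % file that receives the logged data
    StopTime="5000");                 % stop time, specified as text

% Simulate using the parameter values now stored in the model
out = sim(mdl);
```

Unlike name-value arguments passed to sim, which apply only to that simulation, values set with set_param stay in the model until you change them again or close the model without saving.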

When logging to file is enabled on a model, simulation of that model streams logged signals directly into the file. You can choose to log data to a MAT or MLDATX file. Additionally, if the States or Output configuration parameters are enabled and the Format configuration parameter is set to Dataset, those values are streamed into the same MAT or MLDATX file.

Create DatasetRef Object to Reference Logged Dataset Within MAT File

To reference the resulting Dataset object in the logged MAT file, use a DatasetRef object. A DatasetRef object is a lightweight wrapper that references a Dataset object stored in a file, so creating one does not load the referenced MAT file into memory. In contrast, if you call the load function on this file, the software loads the entire file into memory, which might not be possible if the Dataset object contains big data.

dsr = Simulink.SimulationData.DatasetRef("top.mat","topOut");
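Before indexing into the reference, it can help to check what the file and the referenced Dataset object contain. The following is a minimal sketch that assumes top.mat was produced by the simulation above; the variable names varNames, n, and names are introduced here for illustration.

```matlab
% Sketch: inspect a logged MAT file without loading its data into memory.
% Assumption: top.mat exists in the current folder and contains "topOut".

% List the names of the Dataset variables stored in the file
varNames = Simulink.SimulationData.DatasetRef.getDatasetVariableNames("top.mat");
disp(varNames)

% Create the reference, then query the number and names of its elements
dsr = Simulink.SimulationData.DatasetRef("top.mat","topOut");
n = dsr.numElements;
names = dsr.getElementNames;
disp(names)
```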

Obtain Reference to Logged Signal

You can use {} indexing of DatasetRef objects to reference individual signals within a Dataset object without loading the signals into memory. For example, to reference the second signal, enter this command.

sig2 = dsr{2};

The Values field of sig2 is a SimulationDatastore object, which is a lightweight reference to the data of signal 2, stored on disk.

sig2.Values

ans =

SimulationDatastore with properties:

ReadSize: 100

NumSamples: 50001

FileName: '/tmp/Bdoc26a_3233028_433379/tpb52e38ca/simulink-ex81669041/IterativeCounter/top.mat'

Data Preview:

Time Data

_______ ______

0 sec 1 5

0.1 sec 1 5

0.2 sec 2 6

0.3 sec 2 6

0.4 sec 3 7

: :

Obtain More References to Other Logged Signals

You can use some of the logged signals as inputs to the simulation of the referenced model. Create lightweight references for each of the logged signals that connect to the input ports of the Model block. In this example, buses connect to the Model block input ports. The resulting Values fields are structures of SimulationDatastore objects. Each structure reflects the hierarchy of the original bus.

batchdata = dsr{1};

controls = dsr{3};

Create Dataset Object to Use as Simulation Input

Specify the input signals to a simulation using a Dataset object. Each element in this Dataset object provides input data to the Inport block that corresponds to the same index. Create an empty Dataset object named ds. Then, place the references to the logged signals into the Dataset object as its first and second elements.

Use {} indexing on the Dataset object to order elements.

ds = Simulink.SimulationData.Dataset;

ds{1} = batchdata;

ds{2} = controls;

Within each element of the Dataset object, you can mix references to signal data, such as SimulationDatastore objects, with in-memory data, such as timeseries objects.

To change one of the upper saturation limits from 30 to 37, enter this command.

ds{1}.Values(2).Limits.UpperSaturationLimit = timeseries(int32(37),0);

Stream Input Data into Simulation

Open the referenced model CounterAlgorithm as a top model, outside of the context of the model CounterSystem.

open_system("CounterAlgorithm");

When you simulate the model CounterAlgorithm, use the Dataset object named ds as input.

out = sim("CounterAlgorithm",LoadExternalInput="on", ...
    ExternalInput="ds");

The data that is referenced by SimulationDatastore objects is streamed into the simulation without overwhelming the system.

The data for the upper saturation limit is not streamed because that signal is specified as an in-memory timeseries. The change in saturation limit is reflected at around time 6 in the scope. The signal now saturates to a value of 37 instead of 30.

Analyze Logged Data Incrementally in MATLAB

SimulationDatastore objects let you analyze the logged data incrementally in MATLAB. Return to the reference to the second logged signal and assign the datastore to a new variable to simplify access to it.

dst = sig2.Values;

SimulationDatastore objects allow incremental reading of the referenced data. The reading is done in chunks and is controlled by the ReadSize property. The default value for ReadSize is 100 samples. Each sample for a signal is the data logged for a single time step of simulation. Change the ReadSize value to 1000. Each read of the datastore returns a timetable representation of the data.

dst.ReadSize = 1000;

tt = dst.read;

Each read on the datastore advances the read counter. You can reset this counter and start reading from the beginning.

dst.reset;

Use SimulationDatastore objects for incremental access to the logged simulation data for big data analysis. You can iterate over the entire data record in chunks.

while dst.hasdata
    next_chunk = dst.read;
end
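The loop above reads every chunk but does nothing with it. As one possible use, the following sketch keeps a running per-column maximum so that only one chunk is in memory at a time. It assumes top.mat and the topOut Dataset object from the earlier steps, and that each chunk is a timetable with a variable named Data, as the data preview suggests. The names runningMax, chunkMax, and numRead are introduced here for illustration.

```matlab
% Sketch: reduce a logged signal chunk by chunk instead of loading it whole.
% Assumption: top.mat and "topOut" come from the logging-to-file simulation.
dsr = Simulink.SimulationData.DatasetRef("top.mat","topOut");
dst = dsr{2}.Values;     % SimulationDatastore for the second logged signal
dst.ReadSize = 1000;     % number of samples returned by each read
reset(dst);              % start reading from the first sample

runningMax = [];         % per-column maximum seen so far
numRead = 0;             % total number of samples processed
while hasdata(dst)
    chunk = read(dst);                                % timetable of up to ReadSize rows
    chunkMax = max(chunk.Data, [], 1);                % maximum of each column in this chunk
    runningMax = max([runningMax; chunkMax], [], 1);  % fold into the running result
    numRead = numRead + height(chunk);
end

disp(runningMax)
```

Any reduction that can be updated one chunk at a time, such as a minimum, a sum, or a sample count, fits the same pattern.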

Consider Longer Simulations

This example shows how logging to persistent storage streams data from the first simulation into a MAT file. A second simulation then streams the data from that file as input.

A more realistic big data example would have a larger value for the model StopTime configuration parameter, resulting in a larger logged MAT file. The second simulation could also be configured for a longer stop time. However, even with the larger data files for output and input, the memory requirements for the longer simulations remain the same, because only small chunks of the data are loaded into memory at any time.