Train Multiple Agents for Path Following Control

This example shows how to train multiple agents to collaboratively perform path-following control (PFC) for a vehicle. The goal of PFC is to make the ego vehicle travel at a set velocity while maintaining a safe distance from a lead car by controlling longitudinal acceleration and braking, and also while keeping the vehicle traveling along the center line of its lane by controlling the front steering angle. For more information on PFC, see Path Following Control System (Model Predictive Control Toolbox).

Overview

An example that trains a reinforcement learning agent to perform PFC is shown in Train DDPG Agent for Path-Following Control. In that example, a single deep deterministic policy gradient (DDPG) agent is trained to control both the longitudinal speed and lateral steering of the ego vehicle. In this example, you train two reinforcement learning agents — a DDPG agent provides continuous acceleration values for the longitudinal control loop and a deep Q-network (DQN) agent provides discrete steering angle values for the lateral control loop.

The trained agents perform PFC through cooperative behavior and achieve satisfactory results.

Fix Random Number Stream for Reproducibility

The example code might involve computation of random numbers at several stages. Fixing the random number stream at the beginning of some sections in the example code preserves the random number sequence in the section every time you run it, which increases the likelihood of reproducing the results. For more information, see Results Reproducibility.

Fix the random number stream with seed 0 and random number algorithm Mersenne Twister. For more information on controlling the seed used for random number generation, see rng.

previousRngState = rng(0,"twister");The output previousRngState is a structure that contains information about the previous state of the stream. You will restore the state at the end of the example.

Create Environment Object

The environment for this example includes a simple bicycle model for the ego car and a simple longitudinal model for the lead car. The training goal is to make the ego car travel at a set velocity while maintaining a safe distance from lead car by controlling longitudinal acceleration and braking, while also keeping the ego car traveling along the centerline of its lane by controlling the front steering angle.

Load the environment parameters.

multiAgentPFCParams

Open the Simulink® model.

mdl = "rlMultiAgentPFC";

open_system(mdl)

In this model, the two reinforcement learning agents (RL Agent1 and RL Agent2) provide longitudinal acceleration and steering angle signals, respectively.

The simulation terminates when any of the following conditions occur.

(magnitude of the lateral deviation exceeds 1)

(longitudinal velocity of the ego car drops below 0.5).

(distance between the ego and lead car is below zero)

For the longitudinal controller (RL Agent1):

The reference velocity for the ego car is defined as follows. If the relative distance is less than the safe distance, the ego car tracks the minimum of the lead car velocity and driver-set velocity. In this manner, the ego car maintains some distance from the lead car. If the relative distance is greater than the safe distance, the ego car tracks the driver-set velocity. In this example, the safe distance is defined as a linear function of the current ego car longitudinal velocity , that is, . The safe distance determines the tracking velocity for the ego car.

The observations from the environment contain the longitudinal measurements: the velocity error , its integral , and the ego car longitudinal velocity .

The action signal consists of continuous acceleration values between -3 and 2 m/s^2.

The reward , provided at every time step , is

Here, is the acceleration input from the previous time step, and:

if the simulation is terminated, otherwise .

if , otherwise .

For the lateral controller (RL Agent2):

The observations from the environment contain the lateral measurements: the lateral deviation , relative yaw angle , their derivatives and , and their integrals and .

The action signal consists of discrete steering angle actions which take values from -15 degrees (-0.2618 rad) to 15 degrees (0.2618 rad) in steps of 1 degree (0.0175 rad).

The reward , provided at every time step , is

Here, is the steering input from the previous time step, is the acceleration input from the previous time step, and:

if the simulation is terminated, otherwise .

, otherwise .

The logical terms in the reward functions (, , and ) penalize the agents if the simulation terminates early, while encouraging the agents to make both the lateral error and velocity error small.

Create the observation and action specifications for longitudinal control loop.

obsInfo1 = rlNumericSpec([3 1]); actInfo1 = rlNumericSpec([1 1],LowerLimit=-3,UpperLimit=2);

Create the observation and action specifications for lateral control loop.

obsInfo2 = rlNumericSpec([6 1]); actInfo2 = rlFiniteSetSpec((-15:15)*pi/180);

Combine the observation and action specifications as a cell array.

obsInfo = {obsInfo1,obsInfo2};

actInfo = {actInfo1,actInfo2};Create a Simulink environment object, specifying the block paths for both agent blocks. The order of the block paths must match the order of the observation and action specification cell arrays.

blks = mdl + ["/RL Agent1", "/RL Agent2"]; env = rlSimulinkEnv(mdl,blks,obsInfo,actInfo);

Specify a reset function for the environment using the ResetFcn property. The function pfcResetFcn (defined at the end of this example) sets the initial conditions of the lead and ego vehicles at the beginning of every episode during training.

env.ResetFcn = @pfcResetFcn;

Create Agents

For this example you create two reinforcement learning agents. Both agents operate at the same sample time in this example. Set the sample time value (in seconds).

Ts = 0.1;

When you create the agent, the initial parameters of the critic network are initialized with random values. Fix the random number stream so that the agent is always initialized with the same parameter values.

rng(0,"twister");Longitudinal Control

The agent for the longitudinal control loop is a DDPG agent. A DDPG agent approximates the long-term reward given observations and actions using a critic value function representation and selects actions using an actor policy representation. For more information on creating deep neural network value function and policy representations, see Create Actors, Critics, and Policy Objects.

Use the createCCAgent function provided at the end of this example to create a DDPG agent for longitudinal control. The structure of this agent is similar to the Train DDPG Agent for Adaptive Cruise Control example.

agent1 = createACCAgent(obsInfo1,actInfo1,Ts);

Lateral Control

The agent for the lateral control loop is a DQN agent. A DQN agent approximates the long-term reward given observations and actions using a critic value function representation.

Use the createLKAAgent function provided at the end of this example to create a DQN agent for lateral control. The structure of this agent is similar to the Train DQN Agent for Lane Keeping Assist example.

agent2 = createLKAAgent(obsInfo2,actInfo2,Ts);

Train Agents

Specify the training options. For this example, use the following options.

Run each training episode for a maximum of

5000episodes, with each episode lasting a maximum ofmaxstepstime steps.Display the training progress in the Reinforcement Learning Training Monitor dialog box (set the

VerboseandPlotsoptions).Stop the training the DDPG and DQN agents when they receive an average reward greater than

480and1195, respectively. When one agent reaches its stop criteria, it simulates its own policy without learning while the other agent continues training.Do not save simulation data during training to improve performance. To save simulation data set

SimulationStorageTypeto"file"or"memory".

Tf = 60; % simulation time maxepisodes = 500; maxsteps = ceil(Tf/Ts); trainingOpts = rlMultiAgentTrainingOptions( ... AgentGroups={1,2}, ... LearningStrategy="decentralized", ... MaxEpisodes=maxepisodes, ... MaxStepsPerEpisode=maxsteps, ... StopTrainingCriteria="AverageReward", ... StopTrainingValue=[480,1195], ... SimulationStorageType="none");

Fix the random stream for reproducibility.

rng(0,"twister");Train the agents using the train function. Training these agents is a computationally intensive process that takes several minutes to complete. To save time while running this example, load a pretrained agent by setting doTraining to false. To train the agent yourself, set doTraining to true.

doTraining = false; if doTraining % Train the agent. trainingStats = train([agent1,agent2],env,trainingOpts); else % Load pretrained agents for the example. load("rlPFCAgents.mat") end

The following figure shows a snapshot of the training progress for the two agents.

Simulate Agents

Fix the random stream for reproducibility.

rng(0,"twister");To validate the performance of the trained agents, simulate the agents within the Simulink environment. For more information on agent simulation, see rlSimulationOptions and sim.

simOptions = rlSimulationOptions(MaxSteps=maxsteps); experience = sim(env,[agent1, agent2],simOptions);

To demonstrate the trained agent using deterministic initial conditions, simulate the model in Simulink.

e1_initial = -0.4; e2_initial = 0.1; x0_lead = 70; sim(mdl)

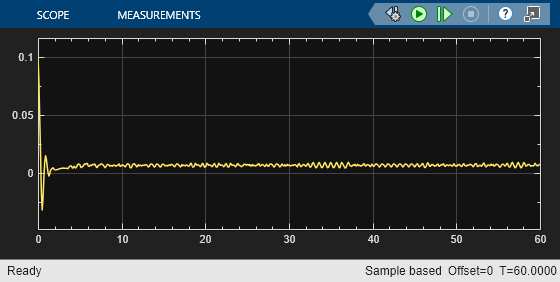

These plots show the results when the lead car is 70 m ahead of the ego car at the beginning of simulation.

The lead car changes speed from 24 m/s to 30 m/s periodically (third plot). The ego car maintains a safe distance throughout the simulation (second plot).

From 0 to 30 seconds, the ego car tracks the set velocity (third plot) and experiences some acceleration (first plot). After that, the acceleration is reduced to 0.

The fourth plot shows the lateral deviation, which greatly decreases within one second and remains less than 0.1 m.

The last plot shows the yaw angle error, which also greatly decreases within one second and remains at very low values.

Local Functions

The sim function calls the reset function at the start of each simulation episode, and the train function calls it at the start of each training episode. The reset function takes as input, and returns as output, a Simulink.SimulationInput (Simulink) object. The output object specifies temporary changes applied to model, which are then discarded when the simulation or training completes. For this example, the function uses the setVariable (Simulink) function to set variable values in the model workspace. For more information, see Reset Function for Simulink Environments.

function in = pfcResetFcn(in) % random value for initial position of lead car in = setVariable(in,'x0_lead',40+randi(60,1,1)); % random value for lateral deviation in = setVariable(in,'e1_initial', 0.5*(-1+2*rand)); % random value for relative yaw angle in = setVariable(in,'e2_initial', 0.1*(-1+2*rand)); end

Helper function for creating a DDPG agent.

function agent = createACCAgent(observationInfo,actionInfo,Ts) L = 48; % number of neurons statePath = [ featureInputLayer(observationInfo.Dimension(1),Name="obsInLyr") fullyConnectedLayer(L) reluLayer() fullyConnectedLayer(L) additionLayer(2,Name="add") reluLayer() fullyConnectedLayer(L) reluLayer() fullyConnectedLayer(1)]; actionPath = [ featureInputLayer(actionInfo.Dimension(1),Name="actInLyr") fullyConnectedLayer(L,Name="fc5")]; criticNetwork = layerGraph(statePath); criticNetwork = addLayers(criticNetwork, actionPath); criticNetwork = connectLayers(criticNetwork,"fc5","add/in2"); criticNetwork = dlnetwork(criticNetwork); critic = rlQValueFunction(criticNetwork,observationInfo,actionInfo, ... ObservationInputNames="obsInLyr", ... ActionInputNames="actInLyr"); actorNetwork = [ featureInputLayer(observationInfo.Dimension(1),Name="obsInLyr") fullyConnectedLayer(L) reluLayer() fullyConnectedLayer(L) reluLayer() fullyConnectedLayer(L) reluLayer() fullyConnectedLayer(1) tanhLayer() scalingLayer(Name="ActorScaling1",Scale=2.5,Offset=-0.5)]; actorNetwork = dlnetwork(actorNetwork); actor = rlContinuousDeterministicActor( ... actorNetwork, ... observationInfo, ... actionInfo); actorOptions = rlOptimizerOptions( ... LearnRate=1e-4, ... GradientThreshold=1, ... L2RegularizationFactor=1e-4); criticOptions = rlOptimizerOptions( ... LearnRate=1e-3, ... GradientThreshold=1, ... L2RegularizationFactor=1e-4); agentOptions = rlDDPGAgentOptions( ... SampleTime=Ts, ... CriticOptimizerOptions=criticOptions, ... ActorOptimizerOptions=actorOptions, ... MiniBatchSize=128, ... ExperienceBufferLength=1e6); agentOptions.NoiseOptions.StandardDeviation = 0.6; agentOptions.NoiseOptions.StandardDeviationDecayRate = 1e-5; agent = rlDDPGAgent(actor,critic,agentOptions); end

Helper function for creating a DQN agent.

function agent = createLKAAgent(observationInfo,actionInfo,Ts) L = 24; % number of neurons statePath = [ featureInputLayer(observationInfo.Dimension(1),Name="obsInLyr") fullyConnectedLayer(L) reluLayer() fullyConnectedLayer(L) additionLayer(2,Name="add") reluLayer() fullyConnectedLayer(L) reluLayer() fullyConnectedLayer(1)]; actionPath = [ featureInputLayer(actionInfo.Dimension(1),Name="actInLyr") fullyConnectedLayer(L,Name="fc5")]; criticNetwork = layerGraph(statePath); criticNetwork = addLayers(criticNetwork, actionPath); criticNetwork = connectLayers(criticNetwork,"fc5","add/in2"); criticNetwork = dlnetwork(criticNetwork); critic = rlQValueFunction(criticNetwork, ... observationInfo, ... actionInfo, ... ObservationInputNames="obsInLyr", ... ActionInputNames="actInLyr"); criticOptions = rlOptimizerOptions( ... LearnRate=1e-4, ... GradientThreshold=1, ... L2RegularizationFactor=1e-4); agentOptions = rlDQNAgentOptions( ... SampleTime=Ts, ... UseDoubleDQN=true, ... CriticOptimizerOptions=criticOptions, ... MiniBatchSize=64, ... ExperienceBufferLength=1e6); agent = rlDQNAgent(critic,agentOptions); end

See Also

Functions

train|sim|rlSimulinkEnv

Objects

rlDQNAgent|rlDQNAgentOptions|rlDDPGAgent|rlDDPGAgentOptions|rlTrainingOptions|rlSimulationOptions

Blocks

Topics

- Train Multiple Agents for Area Coverage

- Train Multiple Agents to Perform Collaborative Task

- Train DDPG Agent for Adaptive Cruise Control

- Train Hybrid SAC Agent for Path-Following Control

- Adaptive Cruise Control System Using Model Predictive Control (Model Predictive Control Toolbox)

- Create Actors, Critics, and Policy Objects

- Deep Q-Network (DQN) Agent

- Deep Deterministic Policy Gradient (DDPG) Agent

- Train Reinforcement Learning Agents