3-D Vision

3-D vision helps you understand and reconstruct the three-dimensional structure of the world from visual data. With Computer Vision Toolbox™, you can estimate camera poses using epipolar geometry, triangulate 3-D points from multiple views, and refine the results with bundle adjustment. The toolbox supports stereo vision workflows, including stereo camera calibration, image rectification, disparity map computation, and dense 3-D reconstruction. It also provides tools to manage image and point data, build pose graphs, and visualize spatial relationships for accurate 3-D scene reconstruction using multi-view geometry.
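To make the link between disparity and 3-D structure concrete, here is a minimal sketch in plain Python of how one pixel of a rectified stereo pair can be back-projected to a 3-D point. It is not the toolbox API. The function name `disparity_to_point` and all parameter names are hypothetical, and the sketch assumes identical pinhole intrinsics for both cameras, square pixels, and disparity measured in pixels as the left-image column minus the right-image column.

```python
def disparity_to_point(x, y, disparity, focal_px, baseline_m, cx, cy):
    """Back-project a left-image pixel with known disparity to a 3-D point.

    Assumptions (not taken from the toolbox): rectified stereo pair,
    shared focal length in pixels, principal point (cx, cy), and
    disparity = x_left - x_right in pixels.
    """
    if disparity <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    z = focal_px * baseline_m / disparity  # depth along the optical axis
    x3 = (x - cx) * z / focal_px           # lateral offset from the optical axis
    y3 = (y - cy) * z / focal_px           # vertical offset from the optical axis
    return (x3, y3, z)


# Illustrative values only: 800-pixel focal length, 0.1 m baseline.
point = disparity_to_point(x=400.0, y=300.0, disparity=16.0,
                           focal_px=800.0, baseline_m=0.1,
                           cx=320.0, cy=240.0)
print(point)
```

Because depth is inversely proportional to disparity, small disparity errors on distant points translate into large depth errors, which is one reason calibration and rectification quality matter for dense reconstruction.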

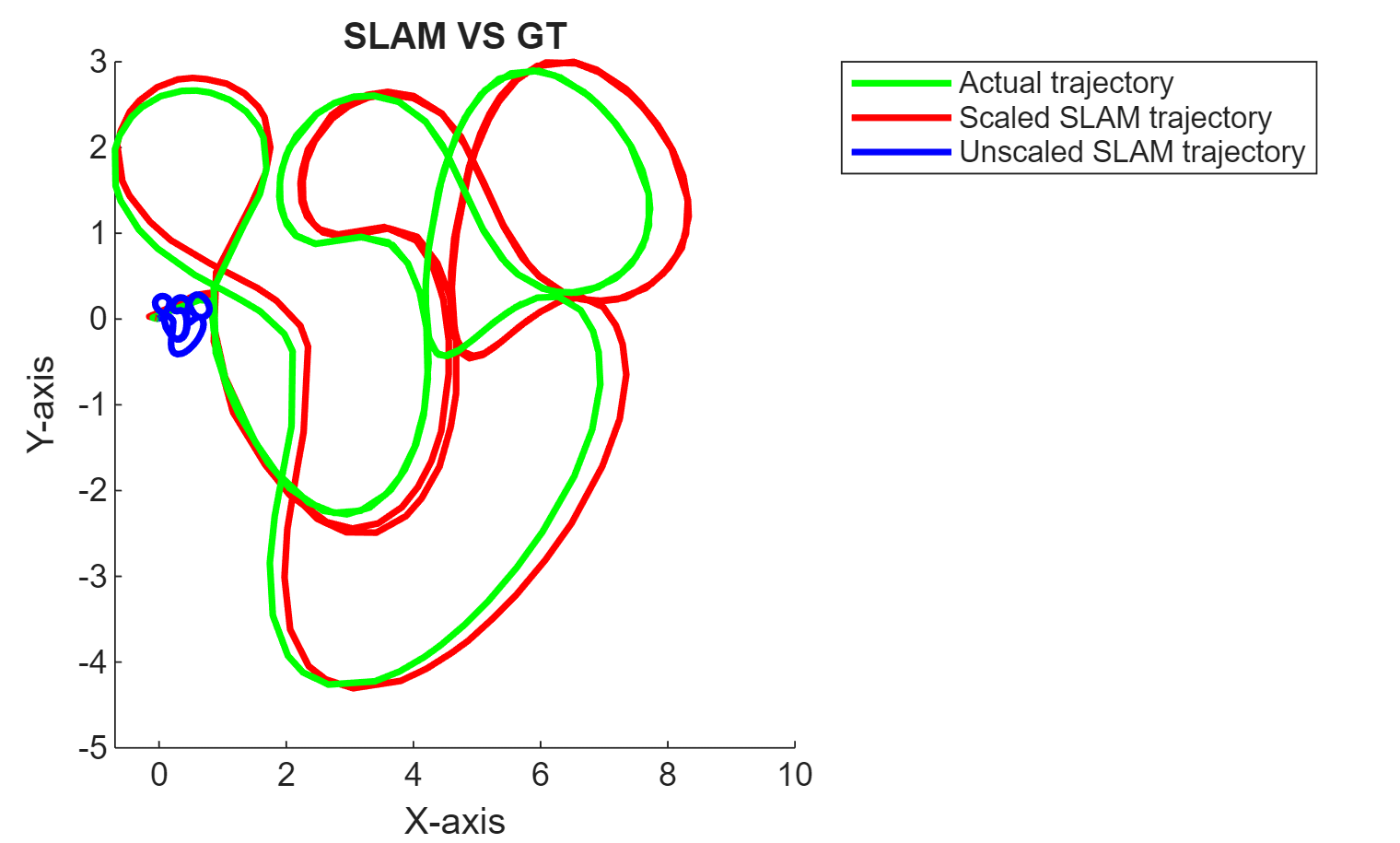

You can use visual simultaneous localization and mapping (visual SLAM) to localize a camera and build a map of its surroundings at the same time, in real time, from monocular, stereo, or RGB-D camera inputs. The toolbox supports fusing inertial sensor data with visual SLAM to improve localization accuracy. You can visualize estimated trajectories, evaluate their accuracy, and deploy visual SLAM using code generation.
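One common way to summarize trajectory accuracy is the root-mean-square error (RMSE) of position between an estimated trajectory and a reference. The sketch below is plain Python, not the toolbox API, and is not necessarily the metric the toolbox reports. The function name `position_rmse` is hypothetical, and the sketch assumes the two trajectories are already expressed in the same coordinate frame and sampled at matching timestamps.

```python
import math


def position_rmse(estimated, ground_truth):
    """RMSE of position error between two equally sampled trajectories.

    Each trajectory is a sequence of (x, y, z) positions. Alignment and
    time association are assumed to have been done beforehand.
    """
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_errors = [
        sum((e - g) ** 2 for e, g in zip(est, ref))
        for est, ref in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Illustrative data: the estimate drifts sideways as the camera moves forward.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
estimate = [(0.0, 0.0, 0.0), (1.0, 0.1, 0.0), (2.0, 0.2, 0.0)]
print(position_rmse(estimate, reference))
```

A single RMSE value hides where the error accumulates, so it is usually worth plotting the per-pose error alongside the trajectories as well.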

The toolbox also provides a comprehensive structure from motion (SfM) pipeline, which helps you reconstruct 3-D scenes from multiple 2-D images taken from different viewpoints. You can detect and match features, estimate camera poses, triangulate points, and refine results using bundle adjustment. You can also apply Neural Radiance Fields (NeRF) for dense reconstruction and novel view synthesis.

Finally, you can process 3-D point clouds to support mapping, localization, and object modeling. You can preprocess, visualize, and register point clouds, and fit geometric shapes to point cloud data. The toolbox supports building maps and implementing SLAM algorithms using 3-D point clouds.
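A typical preprocessing step before registration or map building is to downsample a point cloud onto a voxel grid. The following is a minimal plain-Python sketch of grid averaging, not the toolbox API. The function name `voxel_downsample` is hypothetical, and the sketch assumes points are given as (x, y, z) tuples in consistent units.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_size):
    """Replace all points that fall in the same cubic voxel by their centroid."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    buckets = defaultdict(list)
    for p in points:
        # Integer voxel index along each axis; floor handles negative coordinates.
        key = tuple(math.floor(c / voxel_size) for c in p)
        buckets[key].append(p)
    # Average each axis over the points collected in every occupied voxel.
    return [
        tuple(sum(axis) / len(group) for axis in zip(*group))
        for group in buckets.values()
    ]


# Illustrative data: the first two points share a voxel, the third does not.
cloud = [(0.1, 0.1, 0.1), (0.3, 0.3, 0.3), (1.2, 0.0, 0.0)]
print(voxel_downsample(cloud, voxel_size=1.0))
```

A larger voxel size reduces the point count and speeds up later steps, at the cost of smoothing away fine geometric detail.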

Categories

- Camera Pose Estimation and 3-D Reconstruction

Estimate camera poses using epipolar geometry, triangulation, and bundle adjustment for 3-D reconstruction

- Stereo Vision

Stereo camera calibration, rectification, disparity estimation, and dense 3-D reconstruction

- Visual SLAM

Real-time visual simultaneous localization and mapping (vSLAM) with monocular, stereo, or RGB-D cameras, inertial sensor fusion, and deployment support

- Structure from Motion

Reconstruct 3-D scene structure from multiple views using incremental structure from motion and NeRF

- Process Point Clouds

Preprocess, visualize, and register 3-D point clouds, fit geometric shapes, build maps, implement SLAM algorithms, and apply deep learning