Hey AI, add computation to my modern physics course. Thanks.

Duncan Carlsmith

Department of Physics, University of Wisconsin-Madison

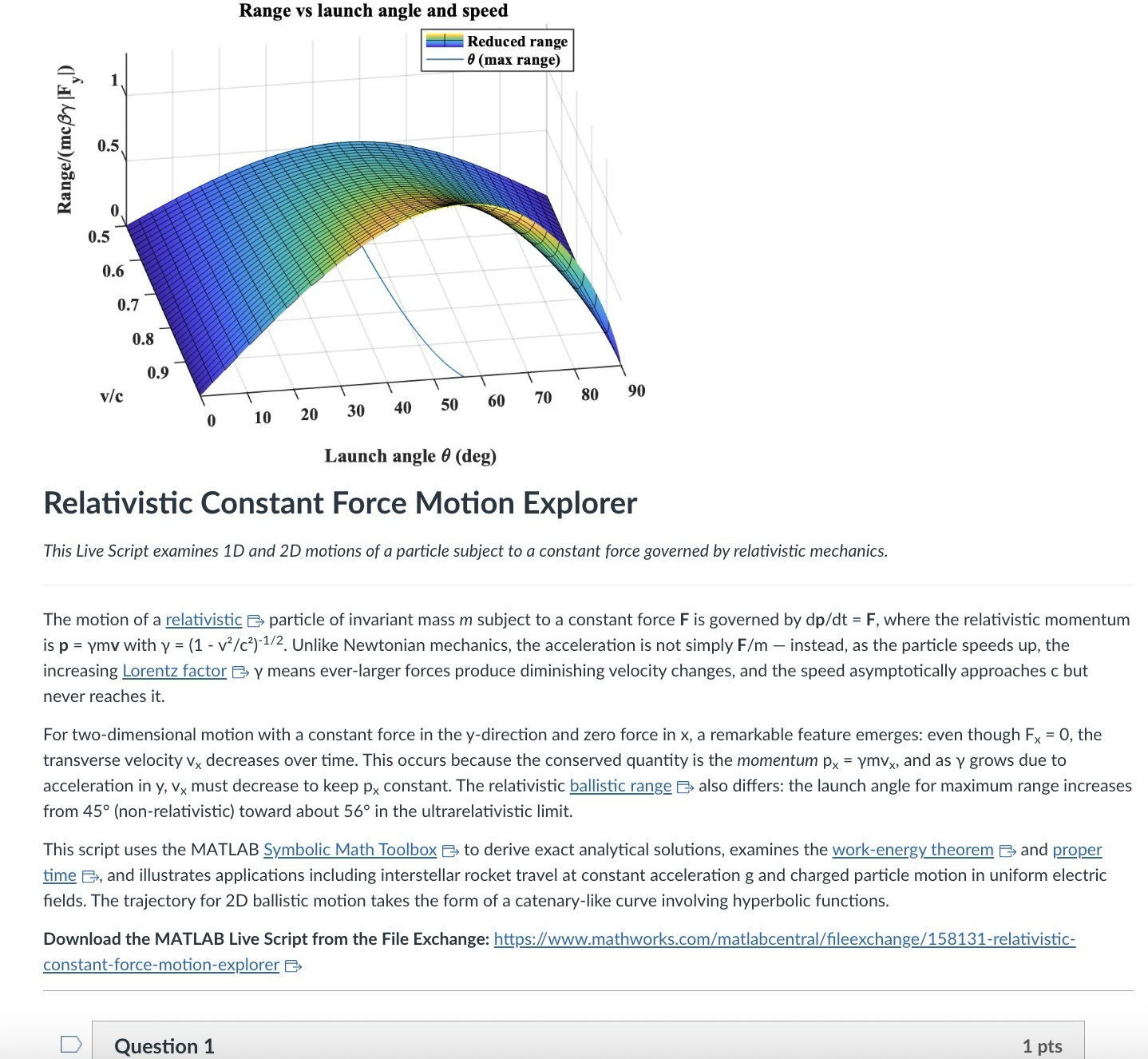

An AI-generated CANVAS quiz header based on a Live Script on relativistic motion.

Introduction

Agentic AI is disrupting higher education. An agentic AI can act on the web rather than relying solely on its training. It can research a topic and produce a credible research paper to specification with validated references. It can create, answer, or assess student work on physics questions from elementary mechanics to graduate-level quantum field theory or quantum computing. It can comprehend, generate, run, and debug a MATLAB Live Script zip package, an HTML5 interactive web application, a JavaScript-enabled website, an ADA-compliant CANVAS site with math and images, or a mobile phone app. A student can authenticate in a learning management system like CANVAS and issue a simple prompt to an agentic AI — “Complete all of my assignments in all of my courses. Thanks.” — and an instructor can, on the other side, with AI assistance and a simple prompt, assess all such submissions, even messy hand-written work. I have demonstrated these capabilities and others.

Here, I’d like to share an experiment leveraging AI to inject computation with MATLAB into a course in modern physics. This may interest the academic readers of this blog and the curious. My prior post Giving All Your Claudes the Keys to Everything introduced my personal agentic AI context.

Live Script goals

Some years back, I started developing and introducing Live Scripts in a two-semester introductory physics course to immerse students in computation and science without sacrificing the rigor and breadth of the class. These students have essentially no background in computing and are exploring STEM majors, principally physics, astronomy, and engineering. A self-documenting Live Script allows a student to explore even a relatively advanced physics topic and data analysis technique like Fourier analysis or autocorrelation, using data that they collect themselves, like a mobile phone voice memo or a digital oscilloscope output trace, and then apply the same techniques to analyze big-science open data from, for example, a gravitational wave observatory, in both cases without getting bogged down in mathematics or code writing. As the course evolves, computational challenges connected to the laboratory component introduce much of the gamut of MATLAB functionality. The goal is to show why and how modeling and assessment using computation are essential in science, and to empower students with practical skills and a sense of what is possible. The traditional lecture/demonstration/homework/discussion format was largely untouched. This course sequence was a five-credit automatic honors course, so extra work was expected. Coding as a tool rather than a chore or vocation is all the more relevant in the AI age.

Assessment strategy

To flexibly direct and assess student work, each Live Script contains a variety of ‘Try this’ suggestions which require the user to adjust a parameter or two and observe the consequences. The student must study the physics described in the background information section, and study the code enough to understand how its logic works, using the supplied comments and URLs to documentation. Tackling a ‘Try this’ suggestion does not require any coding, just changing a parameter value, perhaps with a slider. Additionally, the Live Script contains ‘Challenges’ to extend the code in some simple or possibly advanced way. The Live Script can thus serve different customers, and an instructor can further tailor the script and embedded suggestions and challenges as they choose. The possibilities offered are only exemplary.

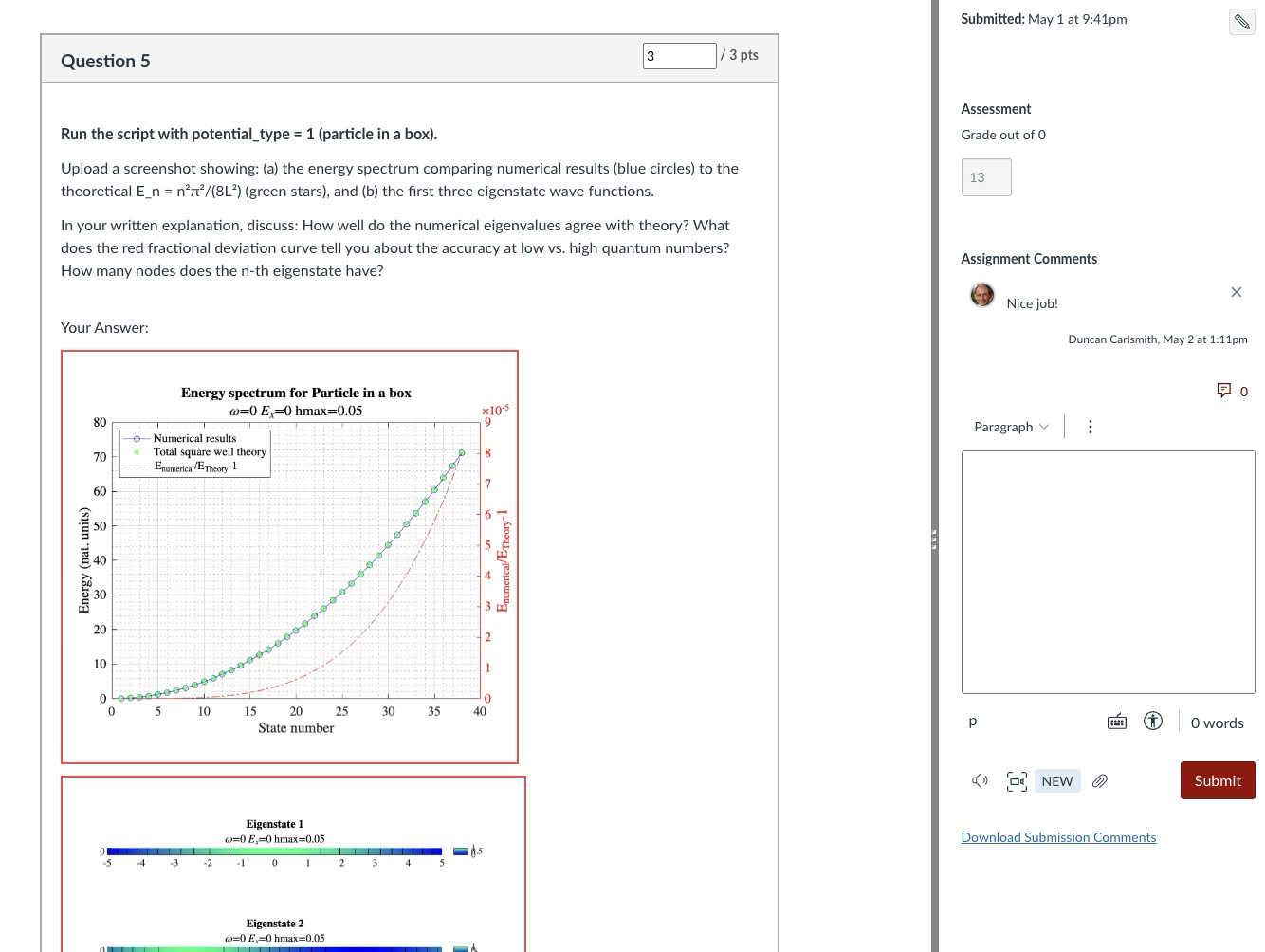

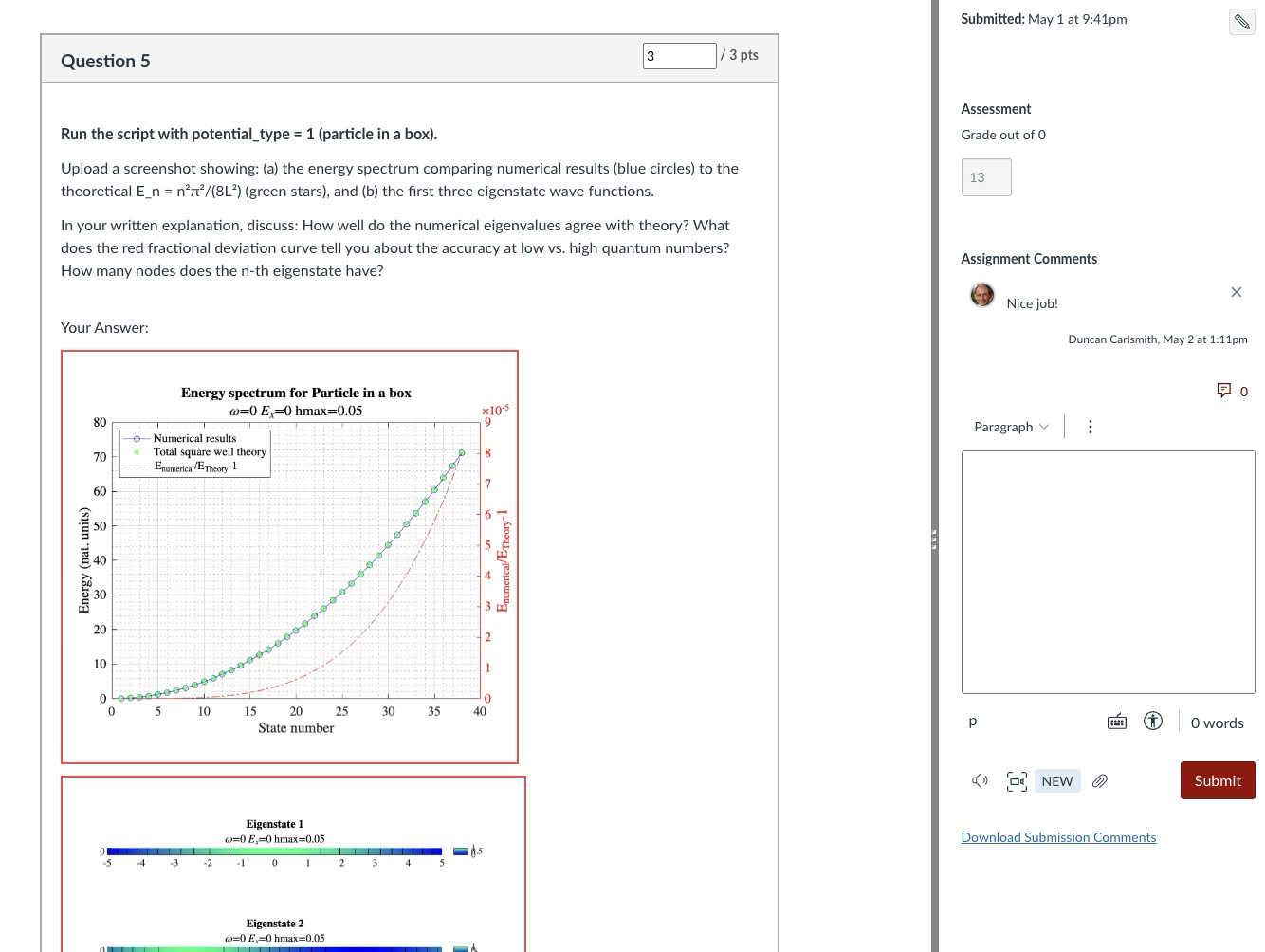

An associated CANVAS quiz contains a few multiple-choice questions related to the ‘Try this’ suggestions, which are auto-graded. Additional questions require the student to upload a product, like an appropriately labeled plot comparing data to a model fit, together with a written explanation. These are readily graded electronically using CANVAS SpeedGrader, with or without an e-rubric. The emphasis is on results and analysis, not on coding facility or style. By design, the burden on the instructor is minimal.

AI-generated computational thread

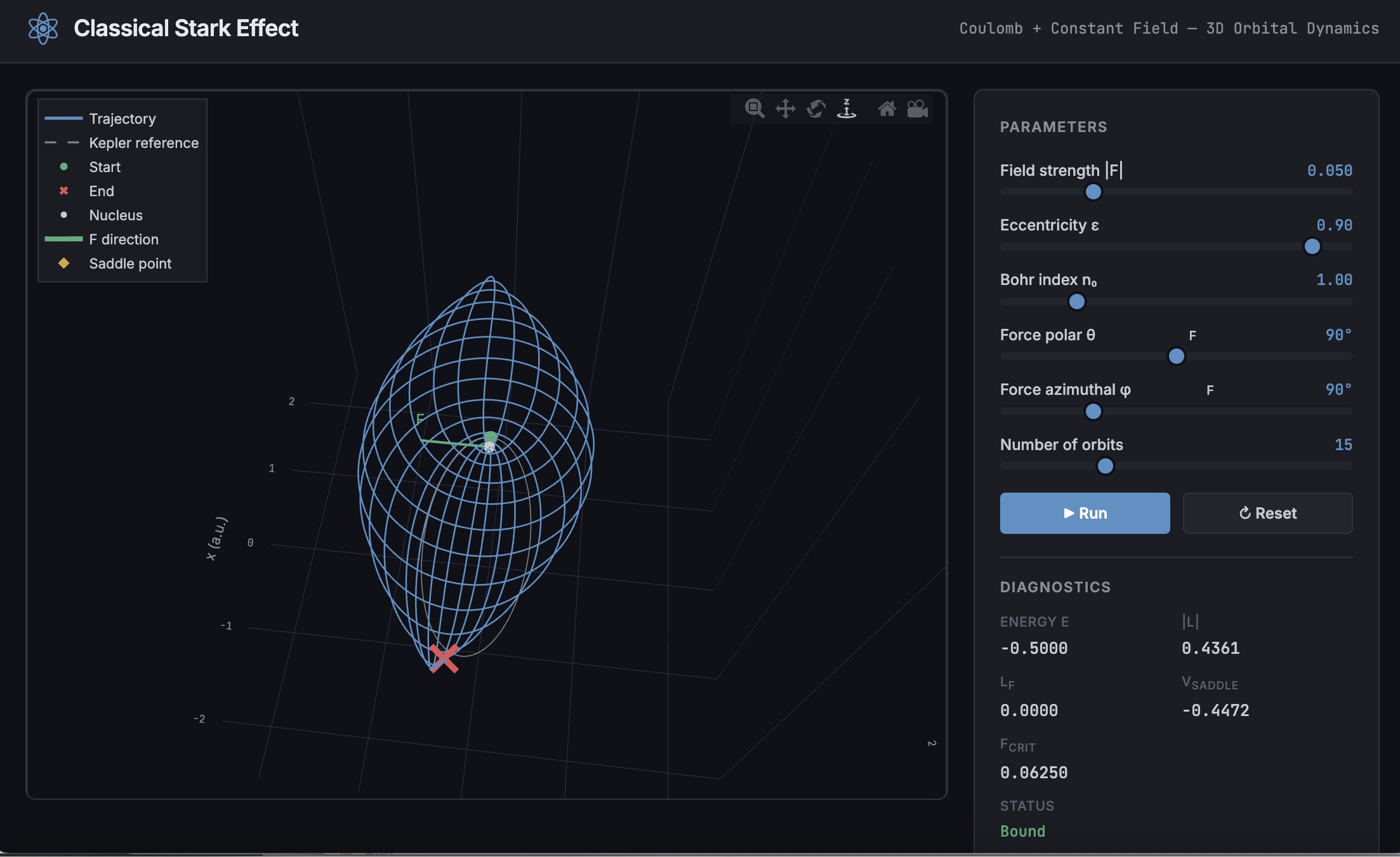

In teaching a 3-credit third-semester survey of modern physics (relativity, quantum mechanics, atomic, molecular, solid state, nuclear, particle, and astro physics) without a lab, again for students with little or no prior exposure to computation, I first needed to develop more advanced, relevant Live Scripts. This course offers three lecture hours per week, rife with live demonstrations of cathode ray tubes, electron diffraction, Geiger counters and sources, thermal radiation, the photoelectric effect, gas discharge tubes observed with diffraction glasses, lasers, magnetic levitation with diamagnetic and high-temperature superconductors, and so on. An additional mandatory hour per week is dedicated to small group active learning in sectional meetings. A contemporary e-text and integrated WebAssign homework system are linked via LTI to CANVAS. These components address learning goals I am loath to sacrifice. I ultimately decided to make the new computational thread an attractive extra-credit option (in parallel with a research paper option) and implemented it with AI assistance mid-stream this semester in a way that could be emulated.

The agent was Claude Desktop running with MCP servers: the Playwright browser-automation server (for CANVAS interaction via authenticated browser session), MATLAB MCP server to run MATLAB, and a filesystem server (for reading local Live Script packages and writing artifacts back to disk). I asked Claude to survey my modern physics syllabus on CANVAS and my 150+ Live Scripts on the MATLAB File Exchange (FEX), and to identify those relevant to a 3rd-semester course in relativity, quantum mechanics, atomic, molecular, solid state, nuclear, particle, and astro physics, with my Introduction to MATLAB script included as a foundations option. Claude returned an initial list of 38 candidate scripts. I removed two that were not a good fit and approved 14, including chaos in relativistic mechanics, relativistic motion in a Coulomb field, numerical solutions to the Schrödinger equation in 1D/2D/3D via the PDE Toolbox, gravitational-wave data analysis, exoplanet transit detection, and clustering in Gaia mission stellar data, among others. For each approved script, Claude downloaded the FEX zip via MATLAB websave and unzip, converted the .mlx to readable .m text via matlab.internal.liveeditor.openAndConvert, ran the key numerical sections in MATLAB to obtain concrete answer values, and then used a single Playwright browser_evaluate call — authenticated by the CSRF token from the active CANVAS browser cookie — to POST a new quiz plus all of its questions to the CANVAS REST API in one round trip. (The MATLAB webwrite path with a CANVAS_API_TOKEN environment variable consistently returned 401 in our testing; the browser-session approach worked reliably for all 14 quizzes.)
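The browser-session authentication step can be illustrated with a minimal Python sketch. It assumes, based on how CANVAS web sessions commonly work rather than on anything stated above, that the session cookie is named `_csrf_token`, that its value is URL-encoded, and that the decoded value is sent in an `X-CSRF-Token` request header. The function name `csrf_headers` is hypothetical, and the actual work in this project ran as JavaScript inside a Playwright browser_evaluate call, not in Python.

```python
from urllib.parse import unquote


def csrf_headers(cookie_header, cookie_name="_csrf_token"):
    """Build request headers from a raw Cookie header string.

    Assumption: the CSRF cookie is URL-encoded and must be decoded
    before it is sent back in the X-CSRF-Token header.
    """
    for part in cookie_header.split(";"):
        name, sep, value = part.strip().partition("=")
        if sep and name == cookie_name:
            return {
                "X-CSRF-Token": unquote(value),
                "Content-Type": "application/json",
            }
    raise KeyError(f"cookie {cookie_name!r} not found")


# Illustrative cookie string; the values are made up.
example = "session=xyz; _csrf_token=abc%2Bdef%3D; theme=dark"
headers = csrf_headers(example)
```

Because the token lives in the logged-in browser session, this approach needs no separately issued API token, which is consistent with the observation above that the browser-session route worked where the token-based route returned 401.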

Each quiz is structured identically: a description block with the FEX thumbnail image, a two- to three-paragraph physics introduction essentially copied from the FEX page or script itself with Wikipedia links to technical terms, a download link, and an “Open in MATLAB Online” link; followed by 4 multiple-choice questions worth 1 pt each (covering a fundamental physics fact, a physical mechanism, an experimental or computational technique, and a data-analysis concept), and 3 essay questions worth 3 pts each (a basic execution + screenshot, a quantitative comparison, and a bonus “Try this” modification). The essay type was deliberate: a CANVAS file_upload question accepts only a file, while an essay question gives the student a Rich Content Editor in which they can paste a screenshot directly from the clipboard and type their analysis in the same field. SpeedGrader then shows everything together. We also added an optional 0-credit student feedback question that we crafted jointly. Total: 13 points per quiz.

SpeedGrader view of a Rich Content Editor question with uploaded results
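The quiz structure described above can be sketched as a set of request payloads. This is a minimal illustration, not the project's actual code: the field names (`question_type`, `answer_weight`, `quiz_type`, and so on) follow my understanding of the CANVAS Classic Quizzes API and should be checked against the current documentation, and the helper names and placeholder question text are hypothetical.

```python
def multiple_choice(name, text, choices, correct_index, points=1):
    """Payload for one auto-graded multiple-choice question."""
    answers = [
        {"answer_text": choice, "answer_weight": 100 if i == correct_index else 0}
        for i, choice in enumerate(choices)
    ]
    return {"question": {
        "question_name": name,
        "question_text": text,
        "question_type": "multiple_choice_question",
        "points_possible": points,
        "answers": answers,
    }}


def essay(name, text, points=3):
    """Payload for one essay question (Rich Content Editor response)."""
    return {"question": {
        "question_name": name,
        "question_text": text,
        "question_type": "essay_question",
        "points_possible": points,
    }}


def build_quiz(title, description_html, mc_items, essay_items):
    """Assemble the 4 multiple-choice + 3 essay pattern and total the points."""
    if len(mc_items) != 4 or len(essay_items) != 3:
        raise ValueError("expected 4 multiple-choice and 3 essay questions")
    quiz = {"quiz": {"title": title, "description": description_html,
                     "quiz_type": "assignment", "published": False}}
    questions = [multiple_choice(*item) for item in mc_items]
    questions += [essay(*item) for item in essay_items]
    total = sum(q["question"]["points_possible"] for q in questions)
    return quiz, questions, total


# Placeholder content only; real questions come from the Live Script.
mc = [(f"MC {i + 1}", "placeholder text", ["A", "B", "C", "D"], 0) for i in range(4)]
es = [(f"Essay {i + 1}", "placeholder text") for i in range(3)]
quiz, questions, total = build_quiz("Relativistic motion", "<p>intro</p>", mc, es)
print(total)  # 13
```

Computing the total alongside the payloads gives a number to compare against the gradebook column after the quiz is posted.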

The full set of 14 quizzes was created in a single working session. I reviewed and accepted the results essentially without revision — a few quiz descriptions needed a follow-up PUT to fix image sizing or to add the MATLAB Online link, but no question content required rewriting. Across the session, the procedure crystallized into a reusable SKILL.md that documents the FEX-to-CANVAS recipe end to end (download with MATLAB, design questions in the four-category MC pattern, batch quiz + question creation, verification checklist).

An AI touch on grading made the assignment fit the course without inflating its weight: a 5% group weight on the Computation category, with a drop-lowest-eleven-of-fourteen rule that keeps each student’s top three quizzes. Each quiz is 13 points, so the maximum contribution is (39/39) × 5% = 5.00% extra credit, and any student can attempt as few or as many as they wish without exceeding that cap. The CANVAS configuration is non-trivial in a few ways and includes one gotcha worth knowing about; details are in Appendix A.

Outcomes

I received about 75 submissions, with 30 of the course's 75 students participating and many others opting for the research paper. Feedback was generally positive. Only a few students ran into difficulty: one suffered a European Space Agency network outage while accessing Gaia data, and another had trouble with a screen-capture process unrelated to MATLAB. Students reported workloads in an appropriate 1–3 hour range per assignment. Only about 20% of submitters elected to submit the (quite lengthy) Introduction to MATLAB assignment for credit; some had likely already encountered MATLAB in the math department or engineering school, where it is used extensively, and others may have reviewed the assignment but elected not to submit because the upload questions concerned image processing (compression and decompression, blurring and deblurring) rather than course-relevant topics. Several students volunteered that these exercises were more informative and fun than their canonical problem-solving exercises.

Lessons

A few patterns from this experiment seem worth carrying forward. First, the ‘Try this’ design pattern that I had already adopted turns out to be unusually well suited to AI-assisted assessment: each suggestion converts almost mechanically into a three-part question (run, capture, analyze) with a defensible rubric. Hence one working session yielded a full term’s worth of quizzes. Second, the agentic build is a short, explicit recipe (read the script, run the calculations, design the questions, post via the CANVAS API in one batched call) that other instructors can replicate and that is now captured for me in a SKILL.md. Third, the CANVAS grading mechanics (drop-lowest, keep-best-three, group weight cap) let extra-credit work scale gracefully: students self-select breadth versus depth, and the instructor’s exposure to grading volume is bounded.

Conclusions

More broadly, I expect education to become more efficient and engaging in this AI age, with much of the routine instructional and learning burden relegated to AI. Frontier AIs can affordably tutor undergraduate students and even PhDs at their level and challenge them in new ways and at scale. Students and instructors both must develop and adjust to new learning strategies and expectations. Documented exploration enabled by interactive, code-aware artifacts like Live Scripts and Jupyter notebooks, created by a student or researcher collaboratively with AIs and other compatriots, may play an ever more important role in this environment.

My SKILL.md is 665 lines and specific to my setup, so it is not shared here. You might instead ask an AI to install Chromium and Playwright or Puppeteer and do all the work in its own container. You might choose a different assignment structure, or draw on your own Live Scripts or Python equivalents hosted on GitHub or somewhere other than the MATLAB FEX. This article documents most of what is in my skill file and should serve as useful background. If you emulate the idea, you will want to develop and test your own process.

Acknowledgements and disclosure

The products described here and this essay were prepared with the assistance of Claude.ai. The author declares he has no financial interest in Anthropic or MathWorks.

Appendix A: CANVAS gradebook configuration

The intent was simple to state: a student who completes three or more MATLAB quizzes at full marks should receive the full 5% extra-credit boost on their course total; a student who completes one quiz at full marks should receive one-third of that boost; a student who attempts none should receive nothing. Implementing this in CANVAS took three coordinated pieces, each of which is straightforward in isolation but has at least one non-obvious failure mode.

A.1 Group structure and drop rule. The 14 quizzes live in a single assignment group named “Computation,” weighted at 5% of the course grade. The group has one rule: drop the lowest 11 scores. With 14 assignments and 11 dropped, CANVAS keeps each student’s top three. Each quiz is worth 13 points (4 multiple-choice at 1 pt + 2 essay at 3 pts + 1 bonus essay at 3 pts), so the maximum sum across the kept three is 39, and the maximum group percentage is 39/39 = 100%, contributing 0.05 × 100% = 5.00% to the course total. The group weight thus acts as a hard ceiling: no matter how many quizzes a student attempts, their boost cannot exceed 5%.

A.2 Treating ungraded as zero, selectively. Out of the box, CANVAS treats ungraded assignments as ignored rather than as zero. This is usually the right default — a student who has not yet attempted an assignment is not penalized for it — but it interacts badly with the design intent here. If a student attempted exactly one MATLAB quiz and scored 13/13, CANVAS would show their Computation group total as 13/13 = 100%, awarding the full 5% boost for a single quiz. To get the intended scaling (one quiz at 13/13 should yield 13/39 = 33.33%, contributing 1.67% rather than 5%), the unattempted quizzes must count as zero in the group calculation.

The simplest way to enforce that globally is the gradebook setting Treat Ungraded as 0, but this applies course-wide and was undesirable in my case because of an exam-administration mixup in which different students had taken different versions of Exam 1; only the version each student took should count toward their exam grade, and a global “treat ungraded as 0” would have penalized students for the version they had not been assigned. The per-assignment alternative is to use the gradebook column menu (the three-dot menu on each assignment column) and choose Set Default Grade, entering 0 with the “Overwrite already-entered grades” box left unchecked. This converts every dash in that column to a 0 while leaving real scores untouched, and only affects the assignment whose menu was used. Applied to each of the 14 MATLAB quizzes, this gives the desired “ungraded as zero” behavior in the Computation group without affecting Exam 1 or any other category. After the fix, the worked examples behave as expected.
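The two treatments of ungraded quizzes can be compared with a short Python sketch. It is a simplified model of the behavior described in this appendix, not CANVAS's actual grading code: it assumes 14 equally weighted 13-point quizzes, represents an ungraded quiz as `None`, and assumes that at least one score is always kept after the drop rule. The function names and parameters are hypothetical.

```python
def group_percentage(scores, points_each=13, drop_lowest=11, ungraded_as_zero=True):
    """Percentage for the Computation group under a drop-lowest rule.

    scores: one entry per quiz; None marks an ungraded quiz.
    """
    if ungraded_as_zero:
        counted = [0 if s is None else s for s in scores]
    else:
        # Default-style behavior: ungraded quizzes are ignored entirely.
        counted = [s for s in scores if s is not None]
    if not counted:
        return 0.0
    # Assumption: the drop rule never removes the last remaining score.
    n_keep = max(1, len(counted) - drop_lowest)
    kept = sorted(counted, reverse=True)[:n_keep]
    return 100.0 * sum(kept) / (points_each * len(kept))


def extra_credit(scores, group_weight=5.0, **options):
    """Contribution to the course total, in percentage points."""
    return group_weight * group_percentage(scores, **options) / 100.0


one_quiz = [13] + [None] * 13          # a single quiz at full marks
three_quizzes = [13, 13, 13] + [None] * 11

print(round(extra_credit(one_quiz, ungraded_as_zero=False), 2))  # 5.0
print(round(extra_credit(one_quiz), 2))                          # 1.67
print(round(extra_credit(three_quizzes), 2))                     # 5.0
```

With ungraded quizzes ignored, the single attempt is the only score in the group and earns the full boost; with zeros filled in, the top three are 13, 0, and 0, which gives the intended one-third.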

A.3 The points_possible gotcha. When a CANVAS Classic Quiz is created via the REST API and the quiz’s questions are POSTed in subsequent calls (or even, as in our case, in the same browser_evaluate call but as separate POST requests), the assignment row that mirrors the quiz in the gradebook can retain points_possible = 0 even though the questions internally sum to 13. The quiz preview displays the question points correctly and the quiz statistics show the correct totals, but the gradebook column header reads “Out of 0” and the group percentage calculation collapses to nonsense. Symptomatically, a student with one real score appeared at 30.77% in the Computation column when they should have been at 10.26%: the column was contributing 4/13 instead of 4/39 because 13 of the 14 columns were silently weightless.

The cure is to force CANVAS to recompute the assignment row’s points_possible from the question sum. The simplest way is per-quiz from the UI: open the quiz, click Edit, scroll to the bottom of the editor without changing anything, and click Save (not “Save & Publish” if the quiz is already published). The act of saving the quiz triggers the recompute. The same effect is available via the API by issuing PUT /api/v1/courses/{course_id}/assignments/{assignment_id} with body {"assignment": {"points_possible": 13}} on each affected assignment, which is faster for batch use.

The lesson for anyone scripting CANVAS quiz creation: after batch-creating quizzes and questions via the API, always verify the gradebook column header reads “Out of N” with N matching the question sum, and apply one of the two cures above before students start submitting. The skill file used in this project now flags this check explicitly.
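The verification step can be sketched as a small Python check. It assumes the assignment records have already been fetched as dictionaries with `id`, `name`, and `points_possible` fields, which is my understanding of what the CANVAS Assignments API returns. The function name, the course id, and the sample records are hypothetical, and the sketch only prepares the PUT requests described above without sending them.

```python
def stale_columns(course_id, assignments, expected_points=13):
    """List the PUT requests needed to repair mismatched gradebook columns."""
    fixes = []
    for record in assignments:
        if record.get("points_possible") != expected_points:
            fixes.append({
                "name": record["name"],
                "method": "PUT",
                "path": f"/api/v1/courses/{course_id}/assignments/{record['id']}",
                "body": {"assignment": {"points_possible": expected_points}},
            })
    return fixes


# Made-up records: one healthy column and one showing "Out of 0".
sample = [
    {"id": 101, "name": "Quiz A", "points_possible": 13.0},
    {"id": 102, "name": "Quiz B", "points_possible": 0.0},
]
for fix in stale_columns(course_id=999, assignments=sample):
    print(fix["method"], fix["path"], fix["body"])
```

Running a check like this right after batch creation, and again after applying the fixes, gives a quick confirmation that every column reads “Out of 13” before students start submitting.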