Get Started with Time Series Forecasting

This example shows how to create a simple long short-term memory (LSTM) network to forecast time series data using the Deep Network Designer app.

An LSTM network is a recurrent neural network (RNN) that processes input data by looping over time steps and updating the RNN state. The RNN state contains information remembered over all previous time steps. You can use an LSTM neural network to forecast subsequent values of a time series or sequence using the previous time steps as input.

Load Sequence Data

Load the example data from WaveformData. To access this data, open the example as a live script. The Waveform data set contains synthetically generated waveforms of varying lengths with three channels. The example trains an LSTM neural network to forecast future values of the waveforms given the values from previous time steps.

load WaveformData

Visualize some of the sequences.

idx = 1;

numChannels = size(data{idx},2);

figure

stackedplot(data{idx},DisplayLabels="Channel " + (1:numChannels))

For this example, use the helper function prepareForecastingData, attached to this example as a supporting file, to prepare the data for training. This function prepares the data using these steps:

1. To forecast the values of future time steps of a sequence, specify the targets as the training sequences with values shifted by one time step. At each time step of the input sequence, the LSTM neural network learns to predict the value of the next time step. Do not include the final time step in the training sequences, because it has no following value to serve as its target.

2. Split the data into a training set containing 90% of the data and a test set containing 10% of the data.

[XTrain,TTrain,XTest,TTest] = prepareForecastingData(data,[0.9 0.1]);
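The one-step shift between predictors and targets can be illustrated on a toy sequence. This is only a sketch of the idea, not the contents of prepareForecastingData; the variable names XToy, XExample, and TExample are hypothetical, and the data is made up rather than taken from WaveformData.

```matlab
% Hypothetical toy sequence: 6 time steps (rows) by 3 channels (columns).
XToy = reshape(1:18,6,3);

% Predictors: every time step except the final one.
XExample = XToy(1:end-1,:);

% Targets: the same sequence shifted forward by one time step.
TExample = XToy(2:end,:);
```

Row t of TExample holds the values that follow row t of XExample, which is what the network is trained to predict at each time step.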

For a better fit and to prevent the training from diverging, you can normalize the predictors and targets so that the channels have zero mean and unit variance. When you make predictions, you must also normalize the test data using the same statistics as the training data. For more information, see .
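As a rough sketch of per-channel normalization, assuming the sequences are stored as a cell array of numTimeSteps-by-numChannels matrices (the layout that the size(data{idx},2) call above suggests), the statistics can be computed over all training time steps at once. The toy data and the names XToy, mu, sigma, and XNorm are hypothetical.

```matlab
% Hypothetical stand-in for the training predictors:
% two sequences of different lengths, two channels each.
XToy = {[1 10; 2 20; 3 30], [4 40; 5 50]};

% Stack all time steps and compute per-channel statistics.
allSteps = cat(1,XToy{:});
mu = mean(allSteps,1);
sigma = std(allSteps,0,1);

% Normalize every sequence with the same training statistics.
XNorm = cellfun(@(x) (x - mu) ./ sigma,XToy,UniformOutput=false);
```

The same mu and sigma would then be applied to the test sequences, rather than statistics recomputed from the test data.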

Define Network Architecture

To build the network, open the Deep Network Designer app.

deepNetworkDesigner

To create a sequence network, in the Sequence-to-Sequence Classification Networks (Untrained) section, click LSTM.

Doing so opens a prebuilt network suitable for sequence classification problems. You can convert the classification network into a regression network by editing the final layers.

First, delete the softmax layer.

Next, adjust the layer properties so that they suit the Waveform data set. Because the goal is to forecast future data points in a time series, the output size must match the input size. In this example, the input data has three channels, so the network output must also have three channels.

Select the sequence input layer input and set InputSize to 3.

Select the fully connected layer fc and set OutputSize to 3.

To check that the network is ready for training, click Analyze. The Deep Learning Network Analyzer reports no errors or warnings, so the network is ready for training. To export the network, click Export. The app saves the network in the variable net_1.
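For readers who prefer the command line, a network with the same overall shape can be sketched programmatically. This is not the code the app exports: the hidden unit count of 128 is an illustrative assumption (the number used by the LSTM template is not stated here), and netSketch is a hypothetical variable name. The sketch requires Deep Learning Toolbox.

```matlab
numChannels = 3;        % the Waveform data has three channels
numHiddenUnits = 128;   % assumption, not taken from the app template

% Sequence input, one LSTM layer, and a fully connected output
% layer whose size matches the number of input channels.
layers = [
    sequenceInputLayer(numChannels)
    lstmLayer(numHiddenUnits)
    fullyConnectedLayer(numChannels)];

netSketch = dlnetwork(layers);
```

There is no softmax layer at the end, which matches the regression setup described above.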

Specify Training Options

Specify the training options. Choosing among the options requires empirical analysis. To explore different training option configurations by running experiments, you can use the Experiment Manager app. Because recurrent layers process sequence data one time step at a time, any padding in the final time steps can negatively influence the layer output. Pad or truncate the sequence data on the left by setting the SequencePaddingDirection option to "left".

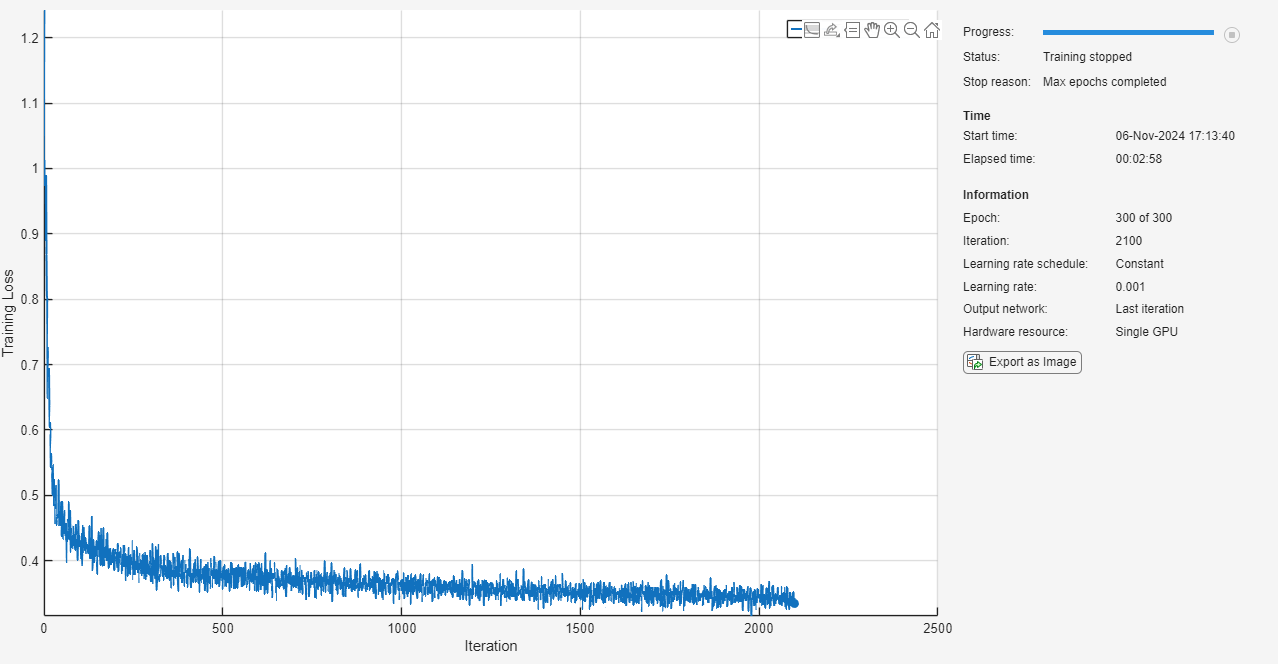

options = trainingOptions("adam", ...
    MaxEpochs=300, ...
    SequencePaddingDirection="left", ...
    Shuffle="every-epoch", ...
    Plots="training-progress", ...
    Verbose=false);
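To make the effect of left padding concrete, the following sketch pads toy sequences to a common length by hand. It only illustrates the concept and is not how trainingOptions or trainnet implement padding internally; the names seqs and padded are hypothetical, and zero is used as the padding value for illustration.

```matlab
% Hypothetical single-channel sequences of different lengths.
seqs = {[1;2;3;4], [5;6]};

% Length of the longest sequence.
maxLen = max(cellfun(@(s) size(s,1),seqs));

% Left padding: prepend zeros so the real data ends at the final time step.
padded = cellfun(@(s) [zeros(maxLen - size(s,1),size(s,2)); s], ...
    seqs,UniformOutput=false);
```

With left padding, the last time steps that the recurrent layer processes are real data rather than padding values.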

Train Neural Network

Train the neural network using the trainnet function. Because the aim is regression, use mean squared error (MSE) loss.

net = trainnet(XTrain,TTrain,net_1,"mse",options);

Forecast Future Time Steps

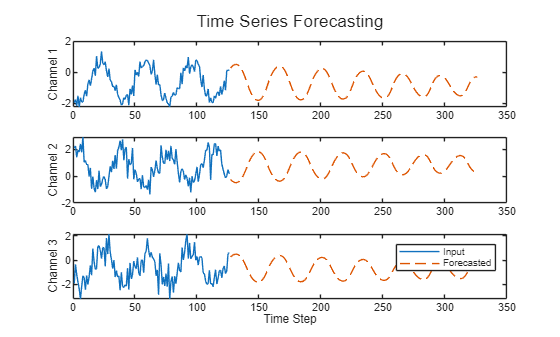

Closed-loop forecasting predicts subsequent time steps in a sequence by using the previous predictions as input.

Select the first test observation. Initialize the RNN state by resetting the state using the resetState function. Then, use the predict function to make an initial prediction Z. Update the RNN state using all time steps of the input data.

X = XTest{1};

T = TTest{1};

net = resetState(net);

offset = size(X,1);

[Z,state] = predict(net,X(1:offset,:));

net.State = state;

To forecast further time steps, loop over the time steps and make predictions using the predict function and the value predicted for the previous time step. After each prediction, update the RNN state. Forecast the next 200 time steps by iteratively passing the previous predicted value to the RNN. Because the RNN does not require the input data to make any further predictions, you can specify any number of time steps to forecast. The last time step of the initial prediction is the first forecasted time step.

numPredictionTimeSteps = 200;
Y = zeros(numPredictionTimeSteps,numChannels);
Y(1,:) = Z(end,:);

for t = 2:numPredictionTimeSteps
    [Y(t,:),state] = predict(net,Y(t-1,:));
    net.State = state;
end

numTimeSteps = offset + numPredictionTimeSteps;
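The feedback pattern in this loop can be seen in isolation with a stand-in predictor. In the sketch below, stepFcn is a hypothetical one-step function that replaces the trained network; unlike an LSTM it carries no internal state, so only the "previous output becomes the next input" structure is illustrated.

```matlab
% Hypothetical one-step predictor standing in for the trained network.
stepFcn = @(y) 0.5*y;

numSteps = 4;
YToy = zeros(numSteps,1);
YToy(1) = 8;                        % plays the role of Z(end,:)

for t = 2:numSteps
    YToy(t) = stepFcn(YToy(t-1));   % feed the previous prediction back in
end
```

Because each step consumes only the previous prediction, the loop can run for any number of steps without further observed data, at the cost of prediction errors compounding over time.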

Compare the predictions with the input values.

figure
l = tiledlayout(numChannels,1);
title(l,"Time Series Forecasting")

for i = 1:numChannels
    nexttile
    plot(X(1:offset,i))
    hold on
    plot(offset+1:numTimeSteps,Y(:,i),"--")
    ylabel("Channel " + i)
end

xlabel("Time Step")
legend(["Input" "Forecasted"])

This forecasting method is called closed-loop forecasting. For more information about time series forecasting and about performing open-loop forecasting, see Time Series Forecasting Using Deep Learning.

See Also

dlnetwork | trainingOptions | trainnet | scores2label | Deep Network Designer