Sensor Models for Unreal Engine Simulation

Simulink® 3D Animation™ provides sensor models that generate synthetic data from the 3D environment. A sensor is an actor in the 3D environment that models a physical sensor, such as a camera or lidar, and generates information about the scene. You can view the 3D environment in the Simulation 3D Viewer through the MATLAB® and Simulink interfaces of Simulink 3D Animation. The simulation environment is visualized using the Unreal Engine® from Epic Games®.

Sensor Data Generation

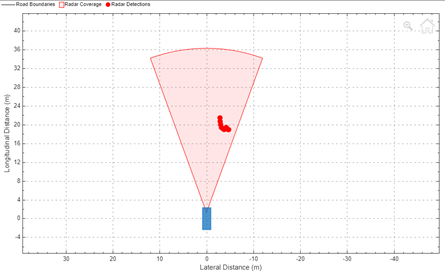

Sensor models use either the ray tracing or the rendering capabilities of the Unreal Engine to generate sensor data from the 3D environment.

Sensor models that use ray tracing interrogate the environment with directed rays. Each ray travels through the scene, and the sensor detects where the ray intersects objects.
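The ray-casting idea can be illustrated with a minimal, engine-independent sketch. It is not the Unreal Engine or Simulink 3D Animation implementation: the scene is assumed to contain only spheres, and the names `ray_sphere_hit`, `cast_ray`, and `max_range` are hypothetical helpers introduced for illustration.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance along the ray to the nearest hit on a sphere, or None."""
    # Normalize the direction so the returned value is a distance in scene units.
    norm = math.sqrt(sum(c * c for c in direction))
    d = [c / norm for c in direction]
    # Vector from the sphere center to the ray origin.
    oc = [o - c for o, c in zip(origin, center)]
    # Solve t^2 + 2*b*t + c = 0 for the ray parameter t.
    b = sum(x * y for x, y in zip(oc, d))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None  # The ray misses the sphere.
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t >= 0:
            return t  # Nearest intersection in front of the sensor.
    return None  # The sphere lies entirely behind the sensor.


def cast_ray(origin, direction, spheres, max_range):
    """Return the distance to the closest sphere hit within max_range, or None."""
    nearest = None
    for center, radius in spheres:
        t = ray_sphere_hit(origin, direction, center, radius)
        if t is not None and t <= max_range and (nearest is None or t < nearest):
            nearest = t
    return nearest


# Hypothetical scene: two spheres on the x-axis, given as (center, radius).
scene = [((10.0, 0.0, 0.0), 1.0), ((20.0, 0.0, 0.0), 2.0)]
print(cast_ray((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), scene, max_range=50.0))  # 9.0
print(cast_ray((0.0, 0.0, 0.0), (0.0, 1.0, 0.0), scene, max_range=50.0))  # None
```

A real sensor model casts many such rays in a pattern and intersects them with arbitrary scene geometry. The sketch only shows how a single directed ray yields a range measurement.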

Sensor models that use rendering return photorealistic images, depth data, and semantic data from the 3D environment, which you can use for object detection.
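As a rough illustration of how depth and semantic outputs can be combined downstream, the sketch below finds the nearest depth value for each semantic label. It assumes the two outputs are same-sized 2D grids, represented here as nested lists. The label IDs and the helper name `nearest_depth_per_label` are hypothetical and not part of the product API.

```python
def nearest_depth_per_label(depth, labels):
    """Map each semantic label ID to the smallest depth among its pixels."""
    nearest = {}
    for depth_row, label_row in zip(depth, labels):
        for d, label in zip(depth_row, label_row):
            if label not in nearest or d < nearest[label]:
                nearest[label] = d
    return nearest


# Hypothetical 2-by-3 depth map (meters) and matching semantic label map.
depth = [[5.0, 4.0, 12.0],
         [3.0, 9.0, 11.0]]
labels = [[1, 1, 0],
          [2, 0, 0]]

print(nearest_depth_per_label(depth, labels))  # {1: 4.0, 0: 9.0, 2: 3.0}
```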

The sensor returns data from the 3D environment to MATLAB or Simulink according to the sample time of the sensor. Due to the lock-step mechanism, MATLAB or Simulink provides the sensor data as output in the next simulation time step. Therefore, the sensor data output is delayed by one time step.
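The one-step delay can be sketched with a toy lock-step loop. This is a simplified model, not the actual co-simulation mechanism. It assumes that the sensor holds its last sample between sample hits and that the output is empty (`None`) before the first sample arrives. The name `run_lockstep` and its parameter `sample_every` are hypothetical.

```python
def run_lockstep(scene_values, sample_every=1):
    """Return the sensor output seen at each simulation step.

    scene_values[k] is the true scene quantity at step k. The sensor samples
    the scene every `sample_every` steps, and each sample becomes available
    as output one step after it is captured.
    """
    outputs = []
    pending = None  # Sample captured in an earlier step, not yet output.
    for step, truth in enumerate(scene_values):
        outputs.append(pending)  # The output lags the capture by one step.
        if step % sample_every == 0:
            pending = truth  # Capture the scene at a sample hit.
    return outputs


truth = [0.0, 1.0, 2.0, 3.0]
print(run_lockstep(truth))                  # [None, 0.0, 1.0, 2.0]
print(run_lockstep(truth, sample_every=2))  # [None, 0.0, 0.0, 2.0]
```

In the first call, the value output at step k is the scene value from step k-1, which is the delay the paragraph above describes.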

Sensor Models in Simulink 3D Animation

You can create sensors for 3D simulation using Simulation 3D blocks in Simulink or sim3d objects and functions in MATLAB. The table summarizes the sensor blocks and classes that you can use for 3D simulation.

| Sensor | Description | Sensor Data |
|---|---|---|

Note: The To Video display block requires Computer Vision Toolbox™. For an example of how to visualize image, depth, and semantic data, see Import RoadRunner Scene into Unreal Engine Using Simulink.

References

[1] Scaramuzza, D., A. Martinelli, and R. Siegwart. "A Toolbox for Easily Calibrating Omnidirectional Cameras." Proceedings of the IEEE International Conference on Intelligent Robots and Systems (IROS 2006). Beijing, China, October 7–15, 2006.

See Also

sim3d.World | sim3d.sensors.IdealCamera | sim3d.sensors.MainCamera | sim3d.sensors.Camera | sim3d.sensors.FisheyeCamera | sim3d.sensors.RaytraceSensor | sim3d.sensors.Lidar | sim3d.sensors.UltrasonicSensor | Simulation 3D Scene Configuration | Simulation 3D Camera Get | Simulation 3D Camera | Simulation 3D Fisheye Camera | Simulation 3D Ray Tracer | Simulation 3D Lidar | Simulation 3D Ultrasonic Sensor | Simulation 3D Ultrasonic Array | Simulation 3D Radar Data Generator

Topics

- Coordinate Systems in Simulink 3D Animation

- Generate Depth, Semantic, and Point Cloud Data Using MATLAB

- Apply Labels to Unreal Scene Elements for Semantic Segmentation and Object Detection

- Import RoadRunner Scene into Unreal Engine Using Simulink

- Automate Virtual Assembly Line with Two Robotic Workcells