estimateCameraMatrix

(Not recommended) Estimate camera projection matrix from world-to-image point correspondences

estimateCameraMatrix is not recommended. Use the estimateCameraProjection function instead. For more information, see Version History.

Syntax

Description

camMatrix = estimateCameraMatrix(imagePoints,worldPoints) returns the camera projection matrix estimated from the world-to-image point correspondences.

[camMatrix,reprojectionErrors] = estimateCameraMatrix(imagePoints,worldPoints) also returns the reprojection error that quantifies the accuracy of the projected image coordinates.
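The two syntaxes can be tried on synthetic data. The following is a minimal sketch, assuming Computer Vision Toolbox is installed and assuming that camMatrix is a 4-by-3 matrix in the row-vector convention, w*[x y 1] = [X Y Z 1]*camMatrix (this section does not state the size). The ground-truth matrix camTrue and the point data are made up for illustration.

```matlab
% Hypothetical ground-truth camera (assumed 4-by-3, row-vector convention).
K = [800 0 0; 0 800 0; 320 240 1];     % illustrative intrinsics
R = eye(3);                            % no rotation
t = [0 0 10];                          % camera 10 units from the origin
camTrue = [R; t] * K;

% Made-up, non-coplanar world points (M-by-3) and their noise-free projections.
rng(0);
M = 20;
worldPoints = rand(M,3)*4 - 2;
proj = [worldPoints ones(M,1)] * camTrue;
imagePoints = proj(:,1:2) ./ proj(:,3);   % M-by-2 pixel coordinates

% Estimate the projection matrix and the per-point reprojection errors.
[camMatrix,reprojectionErrors] = estimateCameraMatrix(imagePoints,worldPoints);

% The matrix is defined only up to scale, so inspect the errors
% rather than comparing camMatrix to camTrue entry by entry.
disp(max(reprojectionErrors))
```

With noise-free correspondences the reported errors should be close to zero. With real detections they reflect measurement noise and lens distortion.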

Examples

Input Arguments

Output Arguments

Tips

You can use the estimateCameraMatrix function to estimate a camera projection

matrix:

If the world-to-image point correspondences are known, and the camera intrinsics and extrinsics parameters are not known, you can use the

cameraMatrixfunction.To compute 2-D image points from 3-D world points, refer to the equations in

camMatrix.For use with the

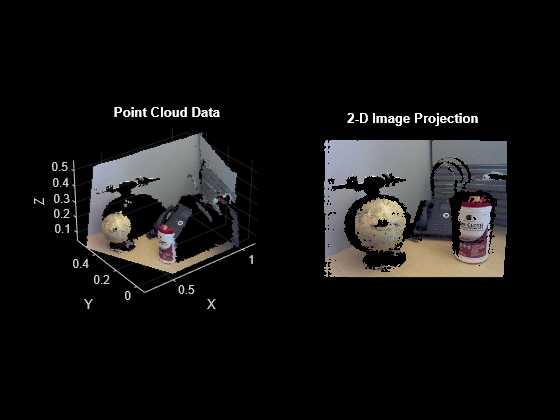

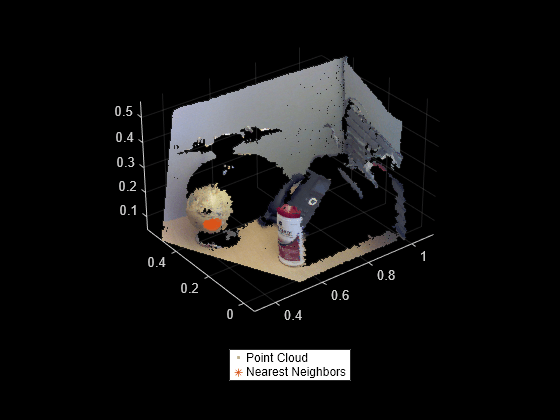

findNearestNeighborsobject function of thepointCloudobject. The use of a camera projection matrix speeds up the nearest neighbors search in a point cloud generated by an RGB-D sensor, such as Microsoft® Kinect®.
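To illustrate the tip about computing 2-D image points from 3-D world points, here is a small worked sketch in base MATLAB. It assumes the 4-by-3 row-vector convention, w*[x y 1] = [X Y Z 1]*camMatrix, which this section does not spell out. The matrix values and the points are hypothetical.

```matlab
% Hypothetical 4-by-3 projection matrix (assumed row-vector convention).
camMatrix = [ 800    0   0;
                0  800   0;
              320  240   1;
             3200 2400  10];

% Three made-up world points, one per row.
worldPoints = [0 0 0;
               1 0 0;
               0 1 10];

% Append a homogeneous coordinate and postmultiply by camMatrix.
homog = [worldPoints ones(size(worldPoints,1),1)] * camMatrix;

% Divide by the third column to obtain pixel coordinates.
imagePoints = homog(:,1:2) ./ homog(:,3);
disp(imagePoints)   % rows: [320 240], [400 240], [320 280]
```

The division by the third column is the λ in the projection equation. It requires implicit expansion, available in MATLAB R2016b and later.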

Algorithms

Given the world points X and the image points x, the camera projection matrix C is obtained by solving the equation

λx = CX.

The equation is solved using the direct linear transformation (DLT) approach [1]. This approach formulates a homogeneous linear system of equations, and the solution is obtained through generalized eigenvalue decomposition.

Because the image point coordinates are given in pixel values, the approach for computing the camera projection matrix is sensitive to numerical errors. To avoid numerical errors, the input image point coordinates are normalized, so that their centroid is at the origin. Also, the root mean squared distance of the image points from the origin is sqrt(2). These steps summarize the process for estimating the camera projection matrix.

1. Normalize the input image point coordinates with transform T.

2. Estimate camera projection matrix C_N from the normalized input image points.

3. Compute the denormalized camera projection matrix C as C_N T^-1.

4. Compute the reprojected image point coordinates x_E as CX.

5. Compute the reprojection errors as

   reprojectionErrors = |x − x_E|.
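The steps above can be sketched in base MATLAB as follows. This is an illustrative sketch, not the function's actual implementation. It assumes the row-vector convention (w*[x y 1] = [X Y Z 1]*C, with C of size 4-by-3), under which the denormalization is C_N*inv(T). It solves the homogeneous system with a singular value decomposition instead of the generalized eigenvalue decomposition mentioned above, and it interprets |x − x_E| as the per-point Euclidean distance. All data and variable names are hypothetical.

```matlab
% Made-up ground truth and noise-free correspondences.
rng(1);
M = 12;
Ctrue = [800 0 0; 0 800 0; 320 240 1; 3200 2400 10];
X = [rand(M,3)*4 - 2, ones(M,1)];          % M-by-4 homogeneous world points
p = X * Ctrue;
x = p(:,1:2) ./ p(:,3);                    % M-by-2 image points in pixels

% Step 1: normalize so the centroid is at the origin and the
% RMS distance from the origin is sqrt(2).
c = mean(x,1);
s = sqrt(2) / sqrt(mean(sum((x - c).^2, 2)));
T = [s 0 0; 0 s 0; -s*c(1) -s*c(2) 1];     % row-vector form: xn = [x 1]*T
xn = [x ones(M,1)] * T;

% Step 2: build the homogeneous DLT system A*q = 0, two rows per point,
% where q stacks the three columns of C_N.
A = zeros(2*M, 12);
for i = 1:M
    Xi = X(i,:);
    A(2*i-1,:) = [Xi, zeros(1,4), -xn(i,1)*Xi];
    A(2*i,  :) = [zeros(1,4), Xi, -xn(i,2)*Xi];
end
[~,~,V] = svd(A);                          % SVD swapped in for the eigen solve
CN = reshape(V(:,end), 4, 3);              % right singular vector of smallest value

% Step 3: denormalize (C_N * inv(T) in this convention).
C = CN / T;

% Steps 4 and 5: reproject and measure the per-point distance.
pE = X * C;
xE = pE(:,1:2) ./ pE(:,3);
reprojectionErrors = sqrt(sum((x - xE).^2, 2));
disp(max(reprojectionErrors))
```

Because C is recovered only up to a scale factor, the reprojection error is a more meaningful check than an entry-by-entry comparison with Ctrue.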

References

[1]

Version History

Introduced in R2019a

See Also

Apps

Objects

stereoParameters | cameraCalibrationErrors | intrinsicsEstimationErrors | extrinsicsEstimationErrors | cameraIntrinsics

Functions

estimateCameraProjection | estimateCameraParameters | showReprojectionErrors | showExtrinsics | undistortImage | detectCheckerboardPoints | generateCheckerboardPoints | cameraProjection | estworldpose | estimateEssentialMatrix | estimateFundamentalMatrix | findNearestNeighbors

Topics

- Evaluating the Accuracy of Single Camera Calibration

- Structure from Motion from Two Views

- Structure from Motion from Multiple Views

- Depth Estimation from Stereo Video

- Code Generation for Depth Estimation from Stereo Video

- What Is Camera Calibration?

- Using the Single Camera Calibrator App

- Using the Stereo Camera Calibrator App